Why AI use case prioritisation fails — before it starts

Most organisations that seriously adopt AI share the same problem: too many ideas, too little clarity. Workshops produce dozens of use-case candidates — from automated invoice checking to an AI-assisted product catalogue. AI use case prioritisation then fails not for lack of will but for lack of method: every idea is lumped together as “promising”, no business unit wants to be left behind, and the loudest argument wins instead of the clearest number.

The result: a pilot launches because the idea is technically exciting — not because it creates value. Twelve months later the budget is gone, team acceptance is damaged, and the next AI budget request fights the memory of the last failure. Structured prioritisation is not bureaucratic overhead — it is the assurance that the first AI project also builds the case for the second.

The four assessment dimensions of robust prioritisation

A defensible scoring model for AI use case prioritisation works with four dimensions that together form a complete picture. Each dimension is scored on a scale of 1 to 5 — weightings can be adjusted according to organisational maturity.

1. Value potential: How large is the measurable benefit — in euros of effort saved, revenue uplift or reduced error risk? This is not wishful thinking but a conservative estimate based on existing process KPIs. A use case without a baseline cannot be prioritised.

2. Feasibility: How complex is technical and organisational delivery? Factors include the system landscape, interface change effort, skills required and third-party dependencies. A high-value use case that presupposes three legacy migrations is not a quick win.

3. Data availability: Are the necessary data accessible, and available in sufficient quality and volume? Missing or poorly structured data are among the most common causes of project delay and should be priced into prioritisation explicitly as a risk, not treated as a late implementation problem.

4. Strategic fit: Does the use case support the organisation’s stated priorities for the year? If leadership prioritises cost excellence, an AI project without an explicit link to that goal will struggle for budget and sponsorship, regardless of its technical potential.
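The four dimensions can be combined into a single weighted score. The following is a minimal sketch, not a prescribed formula: the equal default weights, the function name `weighted_score`, the dictionary keys and the convention that 5 is always the favourable end of the scale (for feasibility, 5 means easy to deliver) are assumptions made for illustration.

```python
DIMENSIONS = ("value", "feasibility", "data", "strategic_fit")

# Assumed equal weights (not from the article); adjust to organisational maturity.
DEFAULT_WEIGHTS = {"value": 0.25, "feasibility": 0.25, "data": 0.25, "strategic_fit": 0.25}


def weighted_score(scores, weights=DEFAULT_WEIGHTS):
    """Return the weighted average of the four 1-5 dimension scores."""
    for dim in DIMENSIONS:
        if dim not in scores:
            raise ValueError(f"missing score for {dim}")
        if not 1 <= scores[dim] <= 5:
            raise ValueError(f"{dim} must be between 1 and 5")
    # Normalise by the weight total so weights need not sum to 1.
    total_weight = sum(weights[dim] for dim in DIMENSIONS)
    return sum(scores[dim] * weights[dim] for dim in DIMENSIONS) / total_weight


# Hypothetical candidate with illustrative scores.
invoice_checking = {"value": 4, "feasibility": 3, "data": 5, "strategic_fit": 4}
print(weighted_score(invoice_checking))  # 4.0
```

Because the result is normalised by the sum of the weights, an organisation that wants to emphasise value potential can pass, say, a weight of 2 for `value` and 1 for the rest without rescaling anything else.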

From scoring to roadmap: the four-phase process

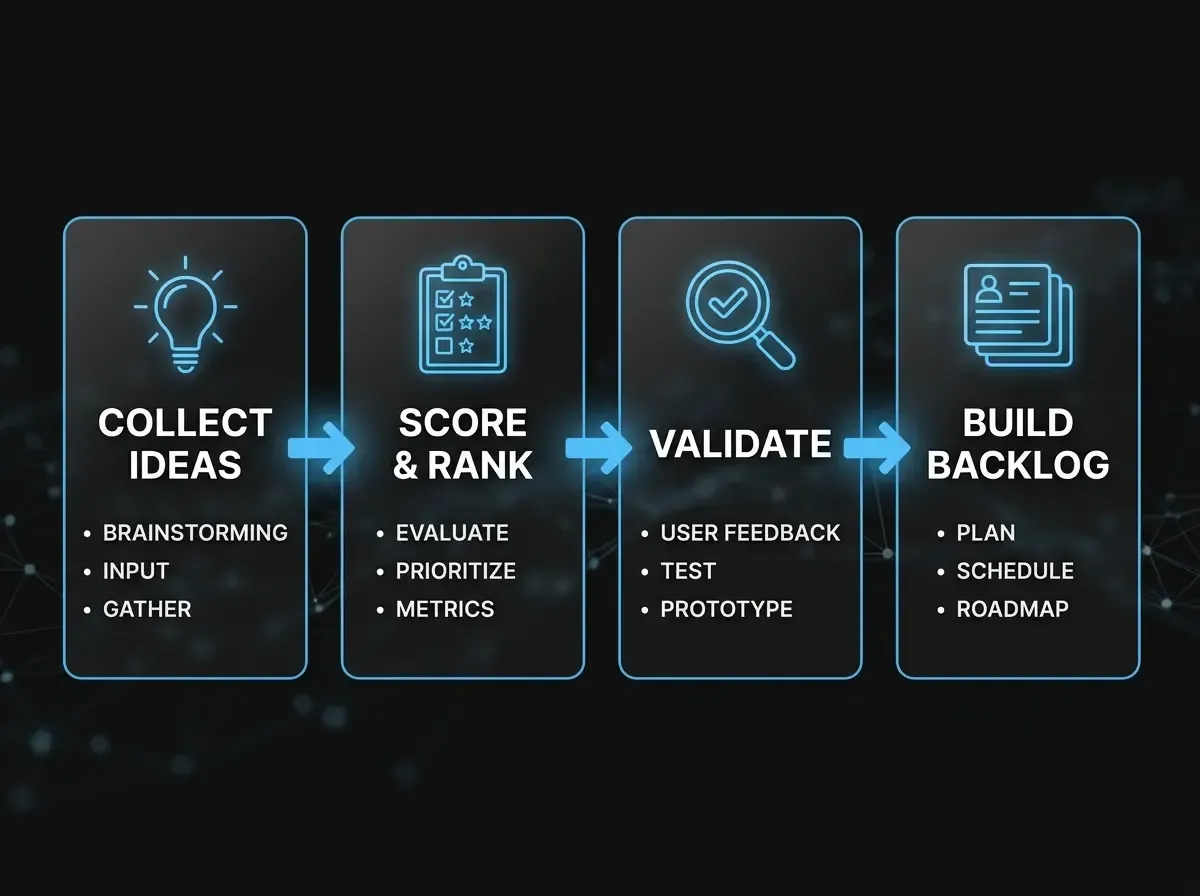

A scoring model alone is not enough: you need a process that ensures the right people are involved at the right time. A four-phase approach that can be completed in four to six weeks has proved effective.

Phase 1 — Collect ideas: Each business unit nominates at most five use-case candidates using a standard brief (process name, pain point, expected outcome, data source). The cap of five forces internal pre-prioritisation and stops technically driven wish lists from flooding the list.
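The standard brief and the cap of five can be checked mechanically before the scoring round. This is one possible sketch: the class `UseCaseBrief`, the helper `check_submissions` and the field names are hypothetical and simply mirror the four brief fields named above.

```python
from collections import Counter
from dataclasses import dataclass

MAX_PER_UNIT = 5  # cap per business unit, as described in Phase 1


@dataclass(frozen=True)
class UseCaseBrief:
    business_unit: str
    process_name: str
    pain_point: str
    expected_outcome: str
    data_source: str


def check_submissions(briefs):
    """Return (units over the cap, process names of briefs with a blank field)."""
    counts = Counter(b.business_unit for b in briefs)
    over_cap = sorted(unit for unit, n in counts.items() if n > MAX_PER_UNIT)
    incomplete = [
        b.process_name
        for b in briefs
        if not all(
            field.strip()
            for field in (b.process_name, b.pain_point, b.expected_outcome, b.data_source)
        )
    ]
    return over_cap, incomplete


# Illustrative data: Finance submits six briefs, Service leaves the data source blank.
briefs = [
    UseCaseBrief("Finance", f"Process {i}", "manual effort", "fewer errors", "ERP export")
    for i in range(6)
]
briefs.append(UseCaseBrief("Service", "Ticket replies", "slow answers", "faster replies", ""))

print(check_submissions(briefs))
```

A brief with an empty data source is flagged here rather than rejected, so the facilitator can decide whether to send it back to the business unit.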

Phase 2 — Assess and score: A cross-functional team of IT, the business and a neutral facilitator scores each candidate on the four dimensions. Scores are documented explicitly, including rationale for extreme values. Consensus is not the goal — transparency of assumptions is.
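Documenting scores together with their rationale can be enforced in a small record type. The sketch below makes two assumptions that the text does not state: that "extreme" means the two ends of the scale (1 and 5), and that the spread between assessors is a useful signal of diverging assumptions. The names `DimensionScore` and `spread` are illustrative.

```python
from dataclasses import dataclass

EXTREME_VALUES = {1, 5}  # assumption: the ends of the 1-5 scale count as extreme


@dataclass
class DimensionScore:
    dimension: str
    score: int
    rationale: str = ""

    def __post_init__(self):
        if not 1 <= self.score <= 5:
            raise ValueError("score must be between 1 and 5")
        # Extreme scores are only accepted with a written rationale.
        if self.score in EXTREME_VALUES and not self.rationale.strip():
            raise ValueError(
                f"{self.dimension}: a score of {self.score} needs a written rationale"
            )


def spread(scores):
    """Difference between the highest and lowest score given by the assessors."""
    values = [s.score for s in scores]
    return max(values) - min(values)


# Two assessors disagree on feasibility; the gap is recorded, not averaged away.
feasibility = [
    DimensionScore("feasibility", 2),
    DimensionScore("feasibility", 5, "interface already exists"),
]
print(spread(feasibility))  # 3
```

A large spread is not an error in this sketch. It marks a dimension where the assessors' assumptions differ and should be written down.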

Phase 3 — Validate: Top candidates are checked in short validation sprints (max. two weeks) for technical feasibility and data quality before resources are committed. A use case that convinces the scoring board but fails on data reality should not enter the roadmap.

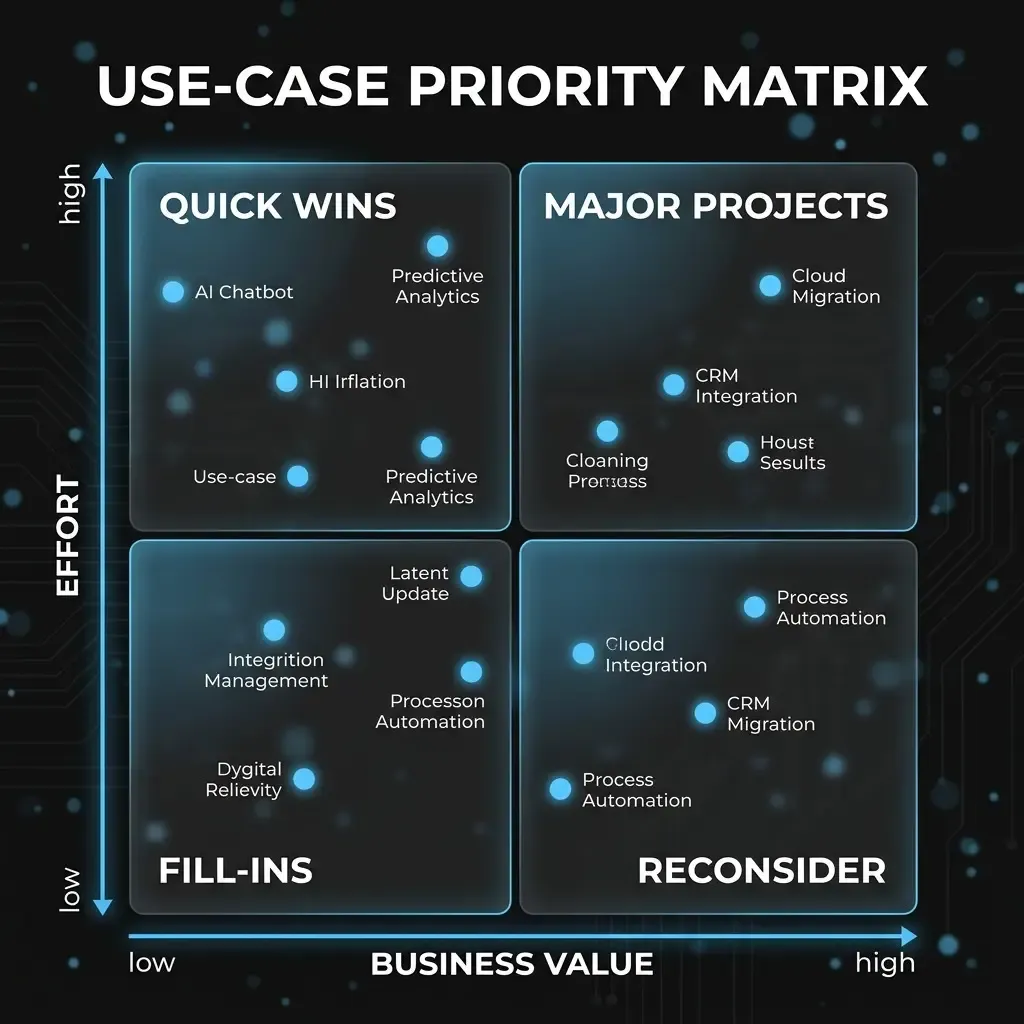

Phase 4 — Prioritise and govern: The output is a use-case backlog in three groups: “Start now” (quick wins), “Prepare next quarter” (strategic projects with strong ROI) and “Park” (good ideas, poor conditions). The backlog is reviewed quarterly — not annually.
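The three backlog groups can be derived from the scores and the validation result. The rule below is only one possible reading: the threshold of 4, the function name `assign_group` and the choice to park every candidate that failed its validation sprint are assumptions, and feasibility is again scored so that 5 means easy to deliver.

```python
def assign_group(value, feasibility, validated, threshold=4):
    """Map a candidate to one of the three backlog groups.

    value, feasibility: 1-5 scores (5 is favourable).
    validated: True if the candidate passed its validation sprint.
    threshold: assumed cut-off for a "high" score.
    """
    if not validated:
        return "Park"  # failed on data or technical reality
    if value >= threshold and feasibility >= threshold:
        return "Start now"  # quick win: high value, low effort
    if value >= threshold:
        return "Prepare next quarter"  # valuable, but needs groundwork
    return "Park"


# Hypothetical candidates: (name, value, feasibility, validated)
candidates = [
    ("Document classification", 4, 5, True),
    ("AI-assisted product catalogue", 5, 2, True),
    ("Internal FAQ system", 4, 4, False),
]
backlog = {name: assign_group(v, f, ok) for name, v, f, ok in candidates}
print(backlog)
```

Re-running the same rule at each quarterly review lets a parked candidate move up once its conditions, for example data quality, have improved.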

Quick wins: the most important lever for AI momentum

An often underestimated aspect of AI use case prioritisation is identifying quick wins — use cases with high value potential and low implementation effort. They are not only financially attractive; they are the main lever for organisational momentum. A quick win that delivers a measurable outcome in eight weeks shifts the internal AI conversation: from “whether AI” to “which AI next”.

Typical quick-win patterns in mid-sized companies include automated document classification in finance (high repetition, clear rules), AI-assisted reply drafts in customer service (existing ticket history as a training base), and internal FAQ systems built on existing knowledge bases. None of these requires an AI research team — they require structured source data. That is the real preparatory work.