Why an AI strategy portfolio is essential for leadership teams

Many organisations start their AI journey with a handful of promising ideas: automated customer dialogue here, intelligent document analysis there, perhaps an internal helpdesk chatbot. Without a structured framework, these ideas often end in budget competition, duplicated work, and stalled rollouts. An AI strategy portfolio gives leadership teams the missing structure: it turns a collection of ideas into a steerable programme.

Portfolio thinking is well established in product development and investment. Applied to AI, it means assessing initiatives relative to one another by strategic fit, resources, dependencies, and expected effect. The result is not a rigid list but a living instrument that evolves with corporate priorities.

Anyone who has seen an AI pilot fade after six months for lack of prioritisation knows the core issue. The problem is rarely a lack of ideas; it is the lack of a mechanism that decides which idea gets attention when, and which one waits.

Four phases of an AI strategy portfolio

A working portfolio runs through four distinct phases. None is a one-off: all four repeat on a cycle, ideally quarterly.

Phase 1: Ideation & screening

Start with a structured idea intake from relevant business units. Not every idea must come from a workshop — operations teams often have the sharpest problem statements. Capture each idea in a standard one-pager: problem, affected process, rough solution, expected impact, data availability.

Screening here is deliberately non-technical: filter out candidates with no usable data or clear regulatory blockers. What passes enters formal scoring.
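The one-pager and the screening filter can be sketched as a small data structure. This is a minimal illustration, not a prescribed template: the class name `IdeaOnePager`, the `title` field, the boolean simplification of data availability, the `regulatory_blocker` flag, and the two sample ideas are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class IdeaOnePager:
    """One-page idea summary holding the five fields named in the text."""
    title: str                  # hypothetical label, added for readability
    problem: str
    affected_process: str
    rough_solution: str
    expected_impact: str
    data_available: bool        # simplification of "data availability"
    regulatory_blocker: bool = False  # assumed flag, set during screening


def passes_screening(idea: IdeaOnePager) -> bool:
    """Non-technical filter: usable data and no clear regulatory blocker."""
    return idea.data_available and not idea.regulatory_blocker


# Hypothetical sample ideas, for illustration only.
ideas = [
    IdeaOnePager("Invoice triage", "Manual sorting is slow", "Accounts payable",
                 "Document classification", "Shorter processing time", True),
    IdeaOnePager("Churn alerts", "Cancellations come as a surprise",
                 "Customer service", "Risk scoring", "Fewer cancellations", False),
]

shortlist = [idea.title for idea in ideas if passes_screening(idea)]
print(shortlist)  # ['Invoice triage']
```

Only ideas on the shortlist would move on to formal scoring; the rest stay on file with the reason they were filtered out.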

Phase 2: Scoring & prioritisation

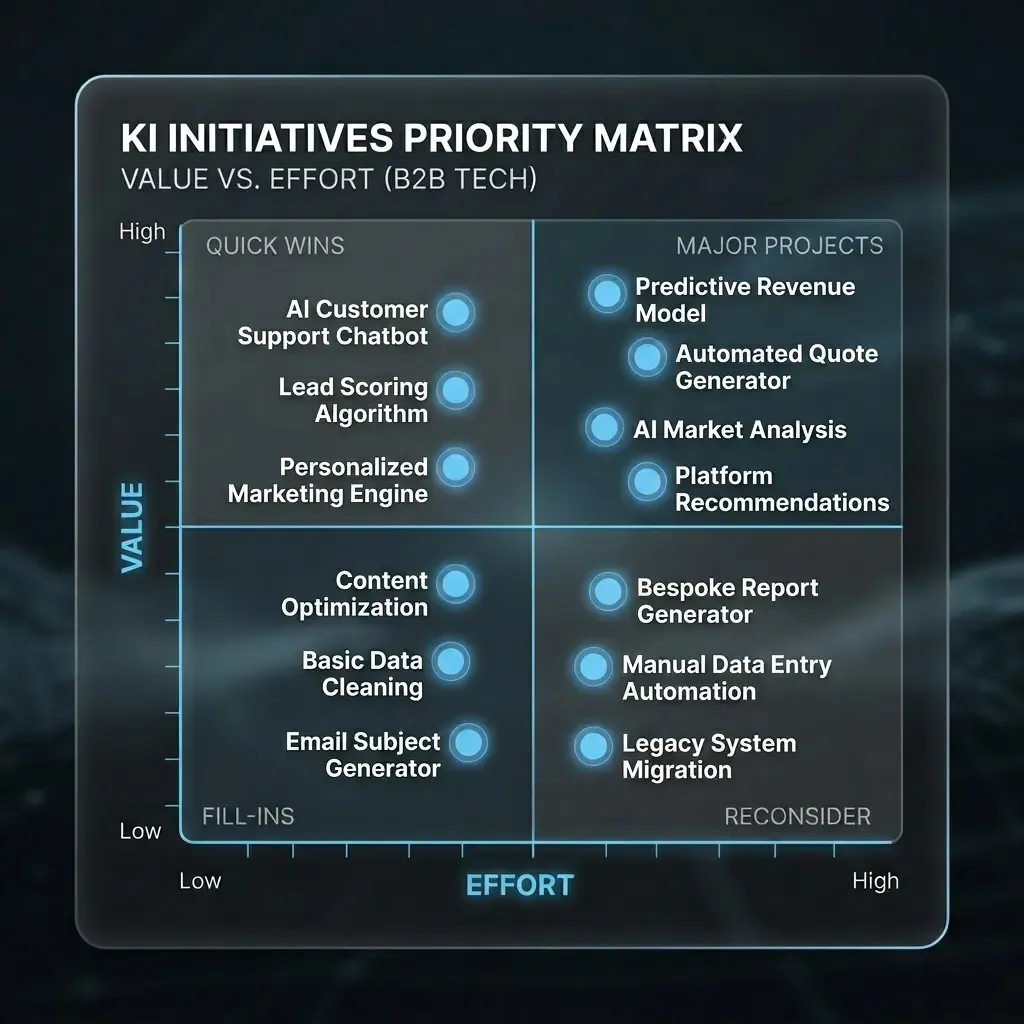

Score against four weighted criteria: strategic fit, expected value, implementation effort, and data & governance readiness. The output is a portfolio matrix: high-value / low-effort quick wins enter the roadmap first; high-value / high-effort bets need dedicated resourcing; low-value ideas are parked, not discarded.

The matrix makes trade-offs visible — which is the point.
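As a rough sketch, the scoring and the matrix placement could look like the following. The weights, the three-point scale, the thresholds for "high" value and "high" effort, and the sample initiatives are assumptions chosen for illustration; the text only specifies the four criteria and the three outcomes.

```python
from dataclasses import dataclass

# Assumed weights; the text only says the four criteria are weighted.
WEIGHTS = {
    "strategic_fit": 0.3,
    "expected_value": 0.3,
    "implementation_effort": 0.2,
    "readiness": 0.2,
}


@dataclass
class Scores:
    strategic_fit: int          # 1 (low) to 3 (high)
    expected_value: int         # 1 (low) to 3 (high)
    implementation_effort: int  # 1 (low effort) to 3 (high effort)
    readiness: int              # data & governance readiness, 1 to 3


def weighted_score(s: Scores) -> float:
    """Weighted sum on the 1-3 scale; effort is inverted so less effort scores higher."""
    total = (
        WEIGHTS["strategic_fit"] * s.strategic_fit
        + WEIGHTS["expected_value"] * s.expected_value
        + WEIGHTS["implementation_effort"] * (4 - s.implementation_effort)
        + WEIGHTS["readiness"] * s.readiness
    )
    return round(total, 2)


def quadrant(s: Scores) -> str:
    """Place an initiative in the portfolio matrix (thresholds are assumptions)."""
    high_value = s.expected_value >= 2
    high_effort = s.implementation_effort >= 2
    if not high_value:
        return "parked"
    return "dedicated bet" if high_effort else "quick win"


# Hypothetical initiatives, for illustration only.
candidates = {
    "Invoice triage": Scores(3, 3, 1, 3),
    "Contract analysis": Scores(3, 3, 3, 2),
    "Meeting summaries": Scores(1, 1, 2, 2),
}

ranked = sorted(candidates, key=lambda name: weighted_score(candidates[name]), reverse=True)
for name in ranked:
    print(name, weighted_score(candidates[name]), quadrant(candidates[name]))
```

Keeping the quadrant separate from the weighted score is a deliberate choice here: the score ranks initiatives within a quadrant, while the quadrant itself depends only on value and effort, as in the matrix described above.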

Phase 3: Roadmap & resources

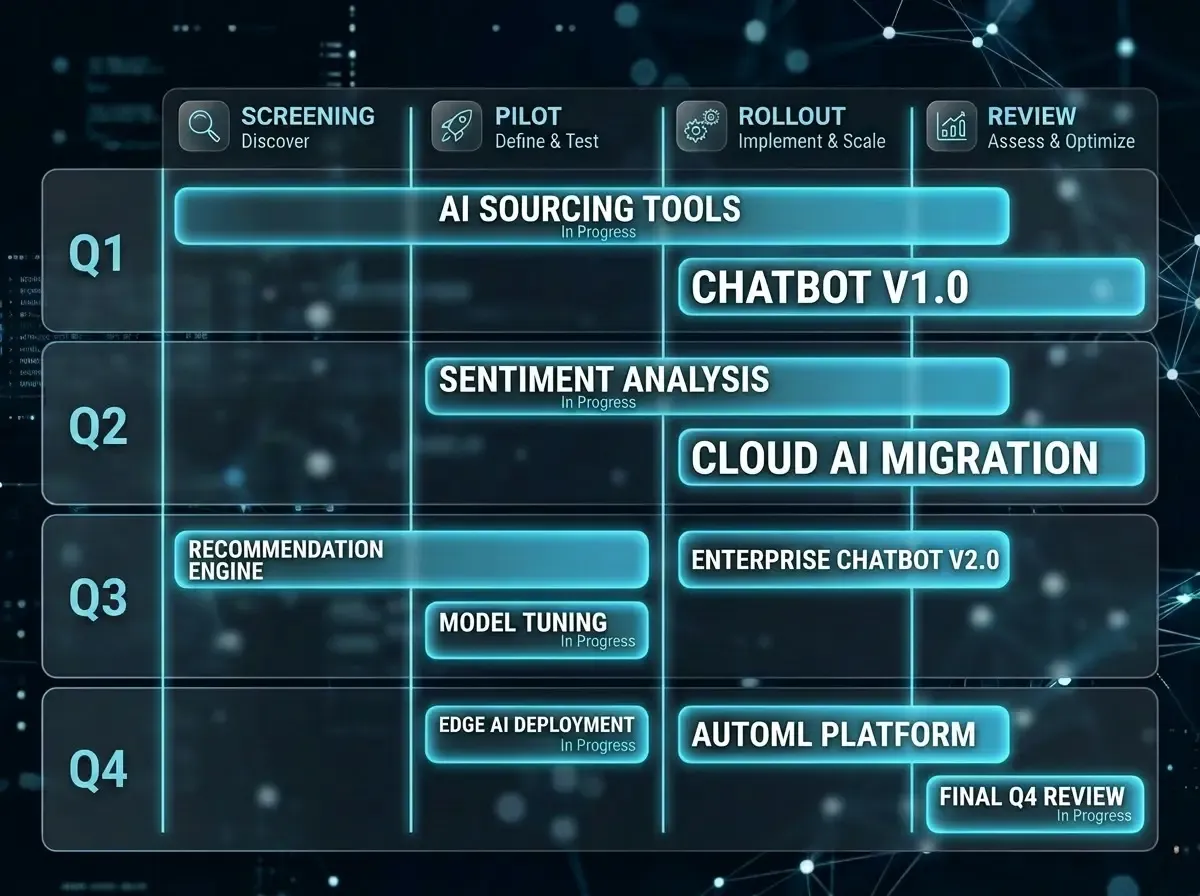

Every initiative needs a business owner; none should start without clear accountability. Spell out IT, data, and operations needs: Which teams are involved? Which partners? What bottlenecks could block other work?

Structure the roadmap in three horizons: active (in delivery), next wave (ready next quarter), and backlog (scored but not yet started). That prevents too many parallel starts that slow each other down.
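One way to picture the three horizons is as a capped assignment over a ranked list. The sketch below assumes a hard limit on parallel work per horizon (`max_active`, `max_next_wave`), which the text does not specify; the function name and the sample initiative names are likewise hypothetical.

```python
from enum import Enum


class Horizon(Enum):
    ACTIVE = "active"        # in delivery
    NEXT_WAVE = "next wave"  # ready next quarter
    BACKLOG = "backlog"      # scored but not yet started


def assign_horizons(ranked_titles, max_active=2, max_next_wave=2):
    """Fill horizons in rank order; the caps are assumed limits on parallel work."""
    plan = {}
    for position, title in enumerate(ranked_titles):
        if position < max_active:
            plan[title] = Horizon.ACTIVE
        elif position < max_active + max_next_wave:
            plan[title] = Horizon.NEXT_WAVE
        else:
            plan[title] = Horizon.BACKLOG
    return plan


# Hypothetical initiatives, already ranked by score.
plan = assign_horizons(["Invoice triage", "Contract analysis", "Helpdesk bot",
                        "Churn alerts", "Meeting summaries"])
for title, horizon in plan.items():
    print(f"{title}: {horizon.value}")
```

In practice the caps would follow from team capacity and the bottlenecks identified per initiative rather than from a fixed number.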

Phase 4: Review & adjustment

Quarterly reviews are for course correction, not blame: Which initiatives hit their goals? What changed in regulation, market, or technology? What moves from backlog to next wave? Keep the session under two hours; if it runs longer, the problem is usually missing data, not too little discussion.

Common mistakes — and how to avoid them

The most frequent failure mode is the absence of a dedicated portfolio owner. A committee alone is not enough: someone must own follow-ups, escalations, and data quality between meetings. In mid-market companies that is often a CDO, CTO, or experienced programme lead with executive access.

Another trap is over-scoring in phase two. Scoring eight criteria on a ten-point scale costs more time than it saves. A simple three-point scale across four dimensions usually produces more consistent decisions than an elaborate model you rewrite next quarter anyway.

Finally, do not treat governance as an afterthought. Data access, GDPR, and role assignments belong in the scoring itself: initiatives with gaps here cost more in delivery and cause exactly the delays a portfolio is meant to prevent.