Why enterprise AI maturity protects against mis-investment

Ask leaders today about their AI plans and you rarely hear “We are not ready yet”. Instead you hear about pilots “just starting”, tool evaluations “nearly complete”, or AI budgets “approved next quarter”. The issue: in many of these organisations the foundations that would let a pilot deliver credible results are missing.

An organisation’s AI maturity is not an academic idea — it is the variable that decides whether an AI initiative is in production after six months or disappears as an “interesting experiment” in a slide deck. Leaders who know their maturity invest more deliberately, pick the right entry points and avoid the classic mistake: too much technology, too little foundation.

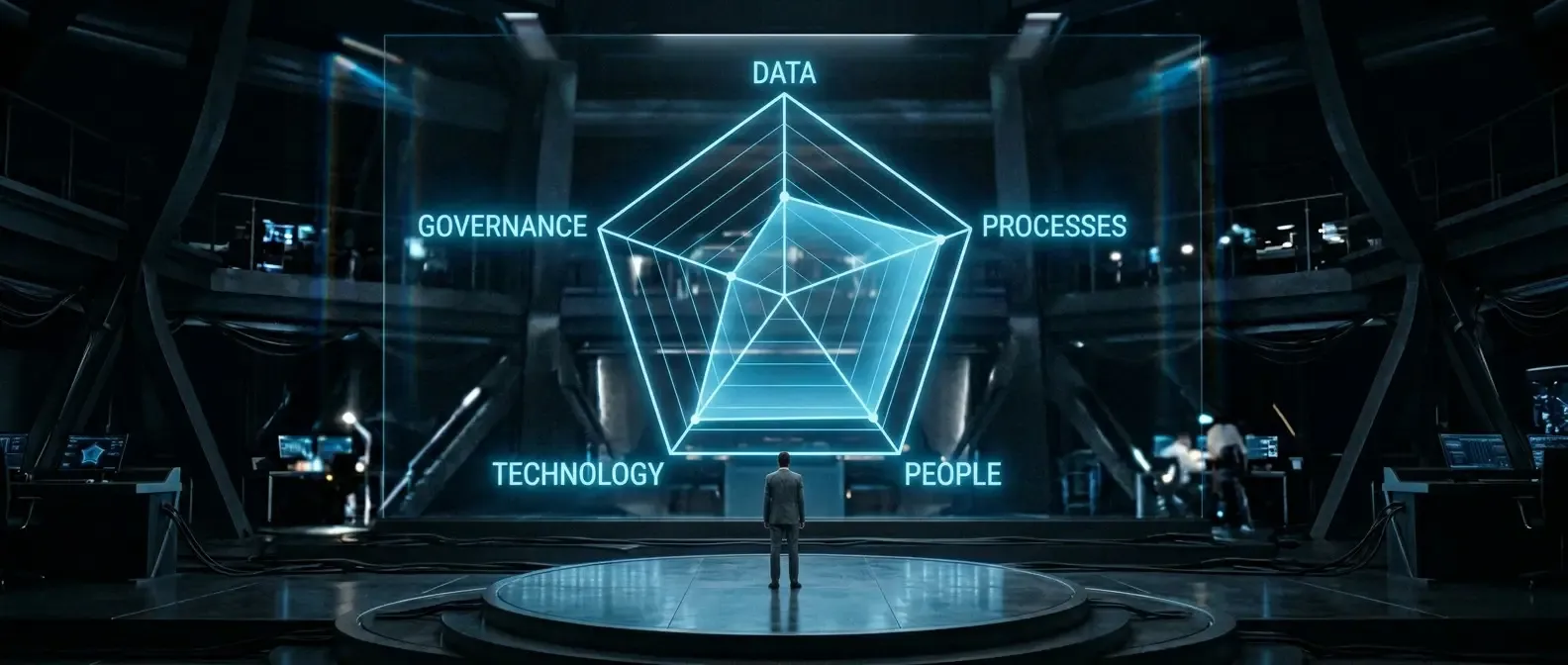

The five dimensions of AI maturity

No single indicator captures AI maturity fully. A model that scores five equally weighted dimensions has proved effective, with each dimension rated on a scale from 1 (not present) to 5 (fully embedded and continuously improved).

1. Data quality and availability

AI models are only as good as the data behind them. At levels 1–2, data lives in silos: spreadsheets, PDFs, proprietary systems without APIs. From level 3 onwards, central sources exist with defined quality and clear ownership. Key questions: Who owns data quality? Which processes are still paper-based? How old are the datasets you last relied on?

2. Process maturity and documentation

AI automates processes — but only when those processes are clearly defined. Where core workflows live mainly in experienced people’s heads, AI tools will achieve little. Where processes are documented with clear triggers, decision points and outputs, you can already automate individual steps at level 3. Test: could you hand a new starter your most important business process in writing within two hours?

3. People, skills and AI acceptance

Technical maturity alone is not enough. AI projects often fail not because of the technology but because the people who must use the systems daily were not brought along. That starts with basic understanding (“What is a language model?”) and extends to co-designing AI-assisted workflows. An honest view of team readiness is not a “soft” factor — it is a hard predictor of success.

4. Technology foundation and integration

Which systems are in use? Are there APIs? Do core systems still run on-premises without integration options? Organisations with modern cloud ERP or CRM systems have a clear advantage here, not because cloud is inherently better, but because modern systems offer standard interfaces. The decisive question: can a new AI tool be plugged into the existing stack without triggering a six-month IT project?

5. Governance, compliance and risk

In organisations in the DACH region (Germany, Austria, Switzerland) the governance dimension is often underestimated. Who may feed which data into a language model? How are AI decisions recorded and explained? Are there clear escalation paths when an AI system is wrong? Without answers, you operate in a regulatory grey zone, especially in sectors with strict compliance requirements (finance, pharma, health).
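The scoring model described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the dimension keys and the `summarise` function are hypothetical names chosen for this example, and the only assumptions taken from the text are the five equally weighted dimensions and the 1 to 5 scale.

```python
from statistics import mean

# Hypothetical short keys for the five dimensions described in the text.
DIMENSIONS = ("data", "process", "people", "technology", "governance")


def summarise(scores: dict[str, int]) -> dict:
    """Validate one set of scores and return the average and the weakest dimensions."""
    missing = set(DIMENSIONS) - scores.keys()
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    for name in DIMENSIONS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name}: score must be between 1 and 5")

    # Equal weighting means the overall value is a plain arithmetic mean.
    overall = mean(scores[name] for name in DIMENSIONS)
    lowest = min(scores[name] for name in DIMENSIONS)
    weakest = [name for name in DIMENSIONS if scores[name] == lowest]
    return {"overall": overall, "weakest": weakest}


# Illustrative values only, not data from a real assessment.
example = {"data": 4, "process": 3, "people": 2, "technology": 4, "governance": 2}
result = summarise(example)
```

The list of weakest dimensions is usually more useful than the average, because it points at where to strengthen the foundation first.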

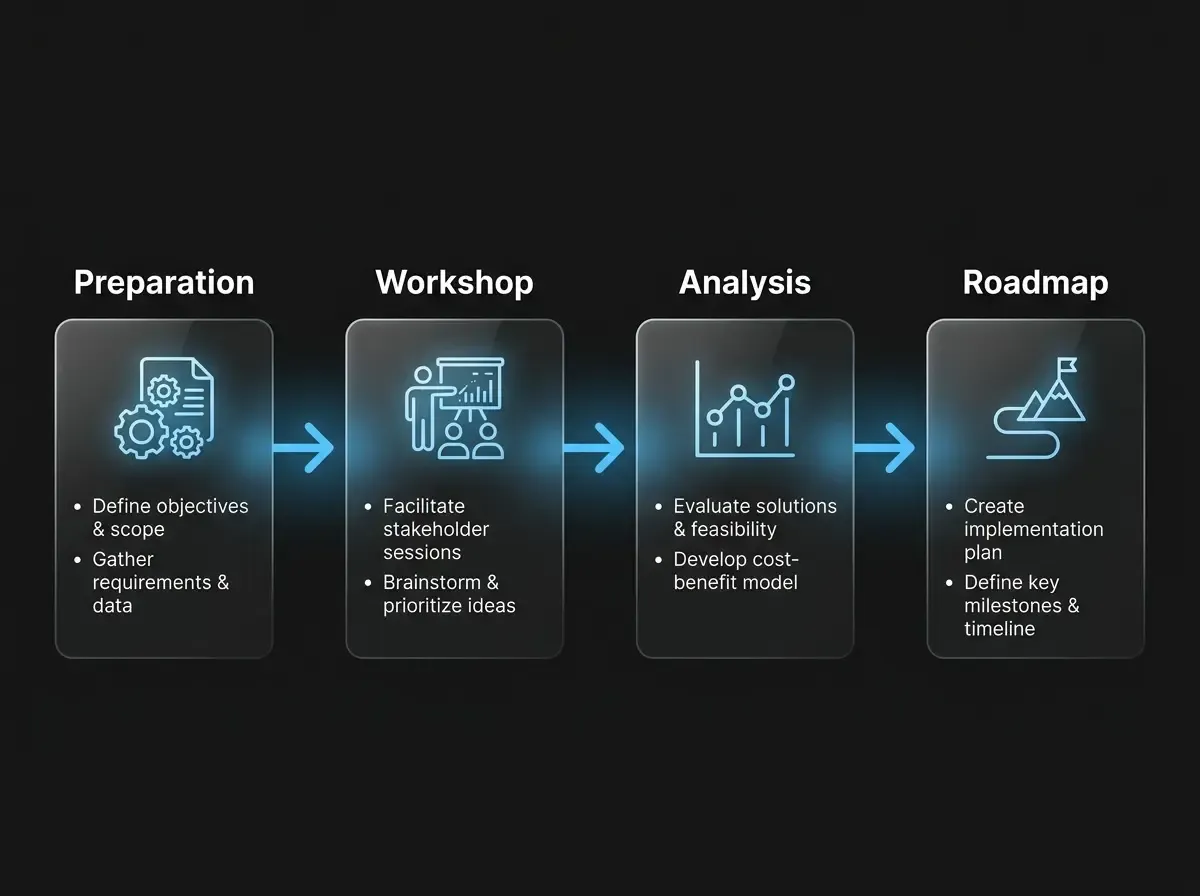

How to run the assessment in practice

The self-assessment is not an IT project — it is a leadership conversation. In practice three to four hours with the right stakeholders is enough: one each from executive management, IT, the business and — where available — legal or compliance. Each of the five dimensions is scored in a short structured round. The output is not a scoring system that produces league tables but a discussion record with concrete gaps and strengths.

Important: scores in these sessions often diverge. IT may rate database infrastructure as “good” while sales leadership still stitches reports from three systems every month — same reality, different scores. That discrepancy is valuable: it shows where perceptions differ and where action is needed.
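One way to make such discrepancies visible is to compute, per dimension, the gap between the highest and lowest score given by the participants. The sketch below is an assumption-laden illustration: the function names, the stakeholder labels and the threshold of 2 points are hypothetical and not part of the assessment method itself.

```python
def divergence(ratings: dict[str, dict[str, int]]) -> dict[str, int]:
    """Map each dimension to the spread (max minus min) across stakeholders.

    `ratings` maps a stakeholder label to that person's {dimension: score}.
    """
    dimensions = set().union(*(r.keys() for r in ratings.values()))
    spread = {}
    for dim in sorted(dimensions):
        values = [r[dim] for r in ratings.values() if dim in r]
        spread[dim] = max(values) - min(values)
    return spread


def discussion_points(ratings: dict[str, dict[str, int]], threshold: int = 2) -> list[str]:
    """Return dimensions whose spread reaches the (assumed) threshold."""
    return [dim for dim, gap in divergence(ratings).items() if gap >= threshold]


# Illustrative values only: IT and sales see the same data landscape differently.
ratings = {
    "it": {"data": 4, "technology": 4},
    "sales": {"data": 2, "technology": 3},
}
flagged = discussion_points(ratings)
```

The flagged dimensions are meant as prompts for the discussion record, not as a ranking of the participants or their departments.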

What follows the assessment

The outcome of an honest maturity assessment is rarely “Let’s launch the big AI transformation now”. More often it is: strengthen one or two dimensions before launching wider initiatives. Typical patterns in mid-sized companies:

- Data is ready, governance is missing: AI tools are already in use but without policies or access control. First step: a simple usage policy and risk classification for data categories.

- Processes documented, technology lags: Clear workflows exist but the ERP is dated. Here a targeted pilot with an external API layer can help before the core system is migrated.

- Technology in place, skills lag: Modern cloud infrastructure but few AI-capable people. Internal enablement is the strongest lever — before rolling out expensive tools nobody uses.

AI maturity is neither a certificate nor an endpoint. It is a tool for an honest baseline — and thus the foundation of any AI strategy that is more than inward-facing marketing.