Why most AI initiatives stall in the evaluation phase

Many mid-market companies have spent the last two years evaluating AI. Workshops were held, demos were run, and budgets were earmarked in the annual plan. Yet in a surprising number of organisations, not a single AI system is in production. The reason is rarely missing technology or budget. It is almost always missing structure: there is no credible enterprise AI roadmap that sets priorities, clarifies ownership, and describes a concrete path from idea to live operation.

A second pattern is just as typical: leaders wait for the “right moment”, whether that means more market clarity, better models, or cleaner data. That moment does not arrive. If you are still waiting in two years, you will still be waiting in four. The companies running credible AI systems today did not wait for perfection. They started in a structured way, with a clearly bounded entry point, consistent support, and the willingness to learn from the first pilot.

The enterprise AI roadmap: three phases with clear outcomes

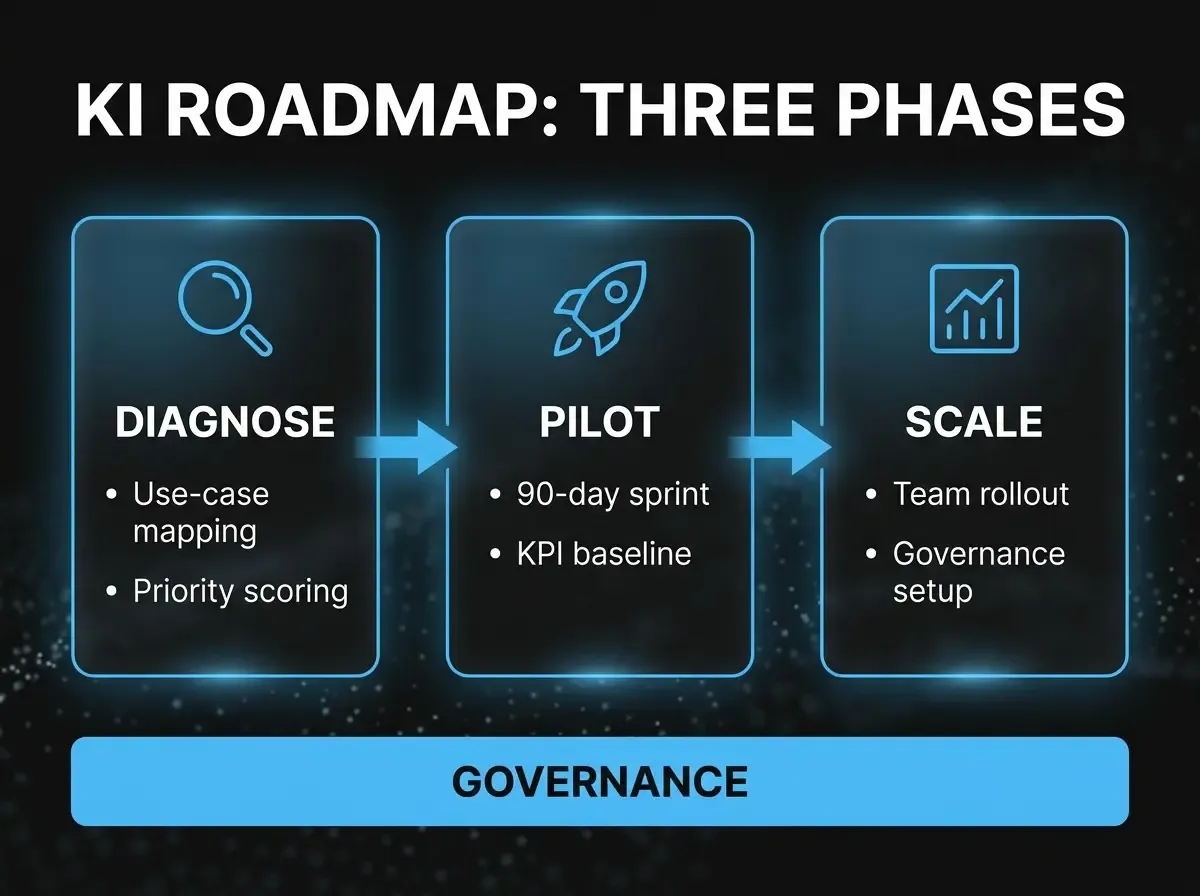

A practical AI roadmap breaks into three sequential phases. Each has a defined goal, concrete deliverables, and a realistic timeline. Transitions between phases are decision gates — not automatic handoffs.

Phase 1: Diagnosis & prioritisation (4–6 weeks)

The goal of this phase is not to deploy AI but to understand where AI actually moves the needle. That sounds obvious, yet in practice the order is often reversed: we regularly see organisations evaluating tools before they know which problem they are solving. The diagnosis phase restores the right order. Three questions sit at the centre: Which processes are highly repetitive and have clear data foundations? Where are the costs of errors or delays especially high? And where is organisational readiness strong enough for a first pilot?

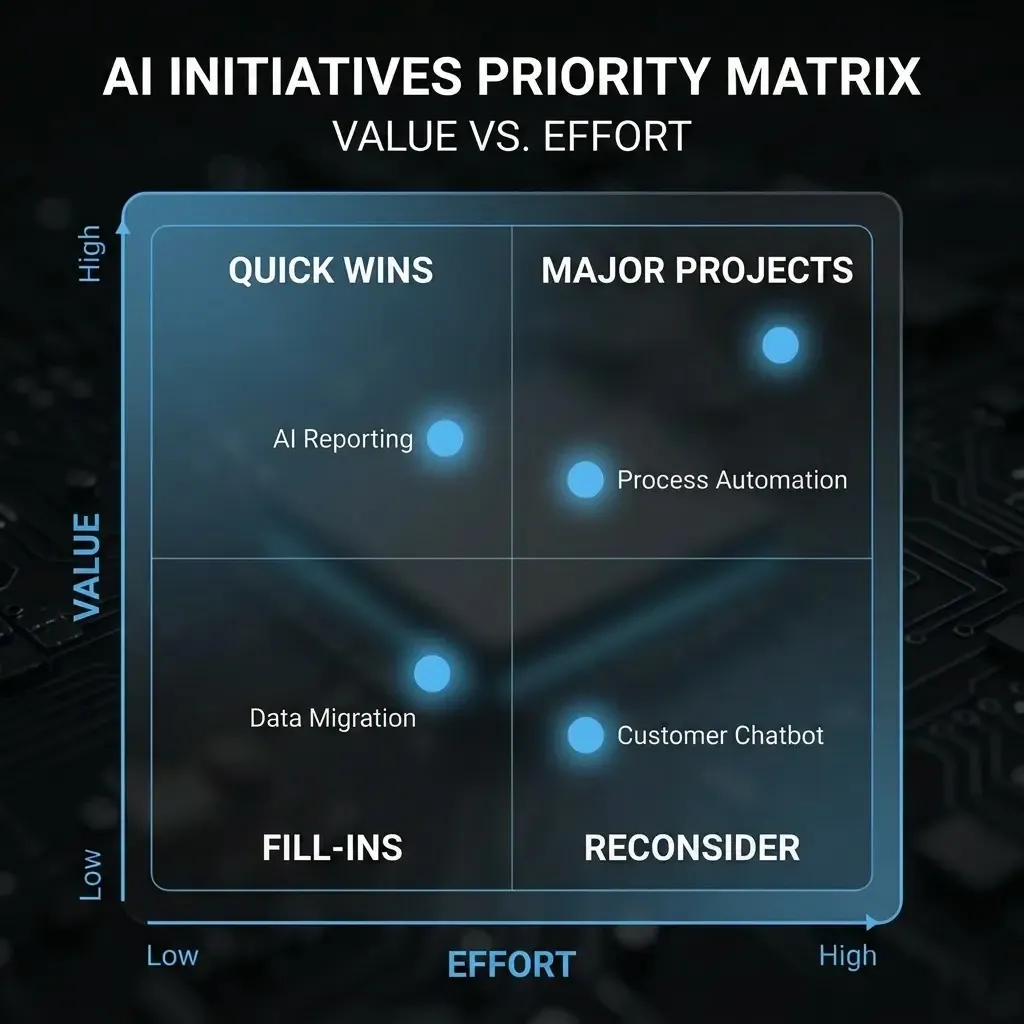

The output is a prioritised list of two to four use cases, scored by impact potential, technical feasibility, and organisational effort. Not every use case suits a first wave — some are strategically valuable but too heavy operationally. The diagnosis phase makes that distinction explicit.
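
To make the scoring step concrete, here is a minimal Python sketch. It assumes a 1–5 scale for each criterion and hypothetical weights (impact 0.5, feasibility 0.3, effort 0.2); the article prescribes neither, and every use-case name below is made up. Organisational effort is inverted so that heavier use cases score lower, and a separate effort cap holds back use cases that are valuable but too heavy for a first wave.

```python
from dataclasses import dataclass

# Hypothetical weights: the article names the three criteria but not how to weight them.
WEIGHTS = {"impact": 0.5, "feasibility": 0.3, "effort": 0.2}


@dataclass
class UseCase:
    name: str
    impact: int       # impact potential, 1 (low) to 5 (high)
    feasibility: int  # technical feasibility, 1 (hard) to 5 (easy)
    effort: int       # organisational effort, 1 (light) to 5 (heavy)

    def score(self) -> float:
        # Effort counts against a use case, so it is inverted on the 1-5 scale.
        return (WEIGHTS["impact"] * self.impact
                + WEIGHTS["feasibility"] * self.feasibility
                + WEIGHTS["effort"] * (6 - self.effort))


def prioritise(candidates, max_items=4, min_score=3.0, max_effort=4):
    """Rank use cases by score and keep at most max_items for the first wave.

    Use cases whose organisational effort exceeds max_effort are held back,
    even if their score is high: valuable, but too heavy for a first pilot.
    """
    eligible = [uc for uc in candidates
                if uc.effort <= max_effort and uc.score() >= min_score]
    return sorted(eligible, key=lambda uc: uc.score(), reverse=True)[:max_items]


# Hypothetical candidates from a diagnosis workshop.
candidates = [
    UseCase("Invoice matching", impact=4, feasibility=4, effort=2),
    UseCase("Support ticket triage", impact=3, feasibility=5, effort=2),
    UseCase("Demand forecasting", impact=5, feasibility=2, effort=5),
    UseCase("Contract review", impact=2, feasibility=2, effort=4),
]

for uc in prioritise(candidates):
    print(f"{uc.name}: {uc.score():.1f}")
```

With these illustrative numbers, invoice matching and ticket triage make the first wave, while demand forecasting scores well but is held back by its effort rating. The point of such a sketch is less the arithmetic than forcing the weights and cut-offs to be written down and debated.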

Phase 2: Controlled pilot (8–12 weeks)

The pilot is the heart of the roadmap — and the most underestimated element. Common mistakes: scope too wide, too many teams involved, success criteria unclear. A good AI pilot is tightly bounded: one use case, one department, measurable baseline metrics before day one. The pilot runs alongside day-to-day operations, not instead of them. If you must restructure operations for the pilot, the scope is too large.

What drives success is test design. What do we measure? How do we compare against the status quo? Who assesses the quality of AI output, and against which criteria? These questions must be answered before day one, not after the pilot ends. After 8–12 weeks you should not expect a final verdict on “AI in general”, but a sound basis for deciding whether to scale or stop this specific use case.
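
One way to pin the test design down before day one is to record each success criterion together with its baseline and the improvement agreed in advance. The Python sketch below does that; the metric names, baselines, and thresholds are hypothetical, and requiring every criterion to pass is just one possible decision rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriterion:
    metric: str
    baseline: float              # measured before day one of the pilot (must be non-zero)
    required_improvement: float  # agreed in advance, e.g. 0.20 = at least 20% better
    lower_is_better: bool = True

    def improvement(self, pilot_value: float) -> float:
        # Relative change versus the baseline, signed so that positive always means "better".
        if self.lower_is_better:
            delta = self.baseline - pilot_value
        else:
            delta = pilot_value - self.baseline
        return delta / self.baseline

    def met_by(self, pilot_value: float) -> bool:
        return self.improvement(pilot_value) >= self.required_improvement


def pilot_decision(criteria, pilot_results):
    """Return 'scale' only if every pre-agreed criterion is met, otherwise 'stop'."""
    missing = [c.metric for c in criteria if c.metric not in pilot_results]
    if missing:
        # A criterion without a measurement means the test design was incomplete.
        raise ValueError(f"no pilot measurement for: {missing}")
    if all(c.met_by(pilot_results[c.metric]) for c in criteria):
        return "scale"
    return "stop"


# Hypothetical criteria for a ticket-triage pilot, fixed before day one.
criteria = [
    SuccessCriterion("handling_minutes", baseline=12.0, required_improvement=0.20),
    SuccessCriterion("error_rate", baseline=0.05, required_improvement=0.0),
]

print(pilot_decision(criteria, {"handling_minutes": 9.0, "error_rate": 0.04}))
print(pilot_decision(criteria, {"handling_minutes": 9.0, "error_rate": 0.06}))
```

Raising an error for a missing measurement, rather than silently passing, mirrors the argument above: if a question was not answered before the pilot, the pilot cannot answer it afterwards.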

Phase 3: Scale & governance (ongoing)

When the pilot succeeds, the harder work begins: moving into production. That means not only technical integration but process changes, training for affected teams, and clear governance structures. Skimp here and you pay twice later: fixing errors from weak controls, and rebuilding trust lost through poor user experiences.

Governance from day one: not an add-on, but the foundation

The line we hear most often in first conversations: “We will sort governance when it gets concrete.” That approach has a fatal flaw: by the time it is concrete, it is usually too late to build a clean governance architecture. If you retrofit privacy, access control, and approval workflows into live operations, you fight entrenched habits, and you often lose.

Governance in the AI roadmap means answering questions such as: Who may feed which data into a language model? How are AI outputs logged when they inform decisions? What happens when an AI system is wrong, and who is accountable? Early on, without operational pressure, these questions can be settled in hours. Once systems are live, they are hard to answer in a structured way.
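
The first two governance questions can be turned into something checkable early on. The sketch below assumes a simple role-to-data-classification allow-list and one JSON-lines log record per AI output that informs a decision; the roles, classifications, field names, user, and model identifier are all hypothetical, and a production system would write to a tamper-evident store rather than an in-memory buffer.

```python
import io
import json
from datetime import datetime, timezone

# Hypothetical policy: data classifications each role may send to a language model.
ALLOWED_DATA = {
    "analyst": {"public", "internal"},
    "hr_specialist": {"public", "internal", "personal"},
}


def may_submit(role: str, data_class: str) -> bool:
    """Check the allow-list before any data is sent to a model; unknown roles get nothing."""
    return data_class in ALLOWED_DATA.get(role, set())


def log_ai_output(log_file, *, user, data_class, model, output, decision_owner):
    """Append one JSON line for an AI output that informs a decision."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "data_class": data_class,
        "model": model,
        "output": output,
        "decision_owner": decision_owner,  # person accountable if the output is wrong
    }
    log_file.write(json.dumps(record) + "\n")
    return record


# Usage: an in-memory buffer stands in for an append-only log store.
log = io.StringIO()
if may_submit("analyst", "internal"):
    log_ai_output(log, user="a.meyer", data_class="internal",
                  model="example-model", output="Supplier risk: medium",
                  decision_owner="head_of_procurement")
print(may_submit("analyst", "personal"))
print(log.getvalue())
```

Recording a named decision owner with every entry is one way to settle the accountability question before systems go live rather than after the first incident.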

For regulated industries such as pharmaceuticals, finance, and healthcare, documented governance is not an optional best practice but increasingly a regulatory requirement. The EU AI Act, which is being phased in over several years, classifies AI systems by risk tier and demands extensive documentation and controls for high-risk applications. Knowing those requirements from the outset avoids costly rework.

What an AI roadmap cannot do — and should not try

A roadmap is not a promise. It is a structured plan based on what you know today — and it may change when the pilot delivers new insight. Treat an AI roadmap as rigid annual planning and you will fail: AI adoption is iterative, not a waterfall project.

At the same time, a roadmap is not a cure for organisational ambiguity. If fundamental questions about process ownership, data strategy, or digital infrastructure are unresolved, an AI roadmap does not fix them; it surfaces them. That is a valuable side effect, but it must not be mistaken for the roadmap's purpose.

The right stance is pragmatic: a roadmap gives direction and commitment — but stays a living document, reviewed after every milestone and adjusted as needed. Companies running credible AI today almost uniformly say their original roadmap was heavily revised after the first pilot. That is not failure — that is the point.