The adoption gap: why employee adoption of corporate LLMs so often fails

The investment has been made: an internal language model is configured, the RAG pipeline is running, single sign-on is in place. The rollout date arrived, the announcement email went out, and three months later the usage dashboard shows sobering numbers. Daily active users: 17 out of 180, or roughly 9%. This is not an isolated case. It is the typical pattern when corporate AI systems are deployed across mid-market organisations.

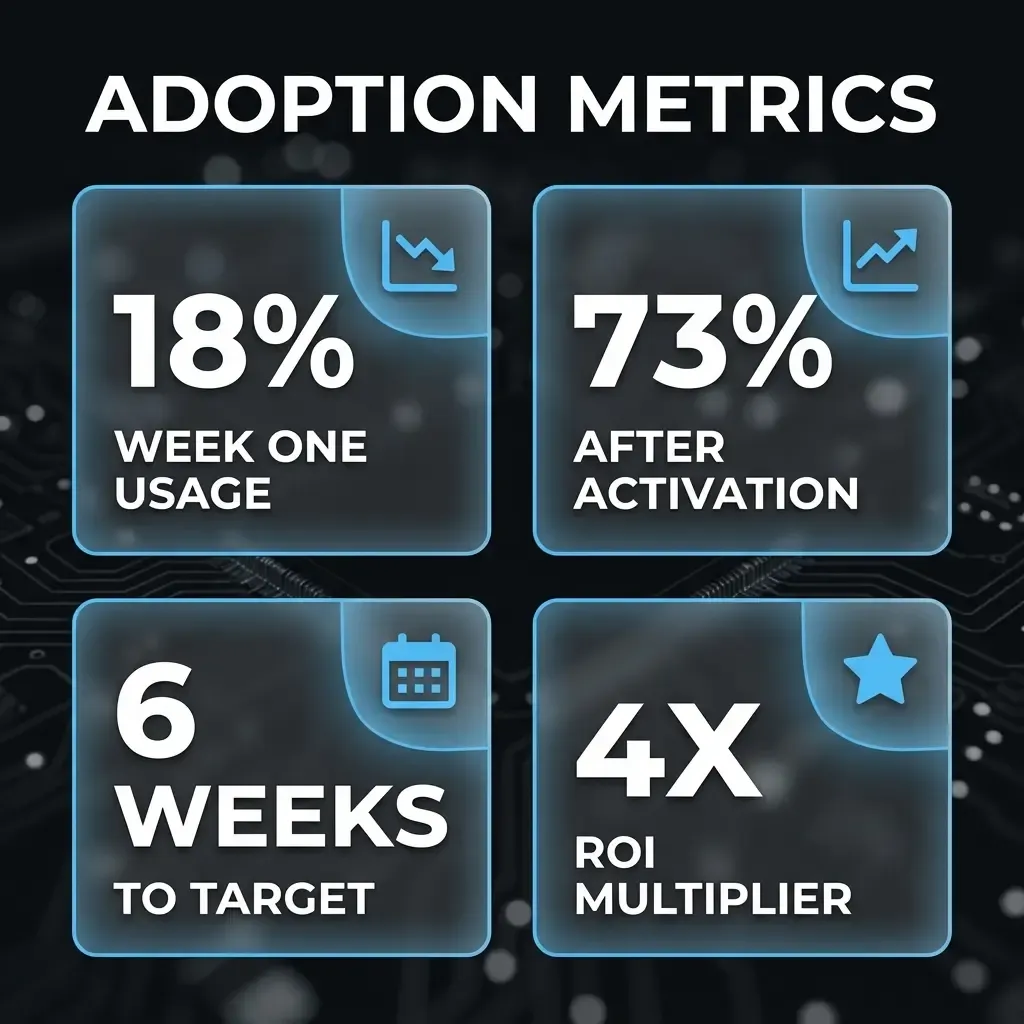

The good news: corporate LLM employee adoption does not depend on chance or organic momentum. It is a controllable process. Organisations that build a structured adoption strategy alongside the technical implementation achieve sustained usage rates of 60 to 80% within weeks of go-live. The difference lies not in the product but in the process.

The five most common adoption killers

Before you can course-correct, you need to understand why employees don't use an available tool. Across numerous LLM deployment projects, five patterns repeat reliably:

1. No day-to-day relevance. The tool was announced, but no one demonstrated which specific problem it solves for this department, this workflow. Employees open it once, find no obvious entry point, and return to familiar ways of working.

2. Fear of job loss and loss of control. Nobody voices this fear openly in a team meeting. But it is there — diffuse, latent, and behaviour-shaping. Someone who suspects that writing a good prompt reduces their value to the organisation will avoid the system.

3. No leadership presence. When senior management or direct line managers don't visibly use the LLM themselves, the message is clear: it is optional. Priorities are set not by announcements but by behaviour.

4. Training that doesn't train. A 45-minute session with generic ChatGPT examples builds no competence for the organisation's own instance. Employees need task-specific exercises using real data from their own work context.

5. No measurable success, no feedback loop. If nobody tracks whether the tool is being used and no wins are communicated, there is no social signal and no positive reinforcement. The system fades from awareness.

The figure above illustrates what structured adoption measures achieve in practice: after a typical first-week usage rate of 18%, targeted activation measures push the rate above 70%. What matters is not the quality of the AI responses but the quality of the human support during the rollout phase.

The three-phase model for successful corporate LLM employee adoption

A proven framework for LLM adoption in organisations works in three sequential phases: Assess, Activate, Anchor. Each phase has clear objectives, responsibilities and success metrics. Crucially, the phases must be completed in sequence — skipping Phase 1 and going straight to rollout recreates the same familiar failures.

Phase 1 – Assess: Where do we actually stand?

Before rollout comes an honest baseline review. Which departments have the most acute information needs? Which workflows are documentation-heavy, knowledge-dependent or repetitive? And — critically — which individuals in the organisation are early adopters willing to try the system first and share their experiences?

This phase also surfaces the workforce's fears and concerns proactively — not through an anonymous survey that disappears into a void, but through structured short interviews with team leads. The insights feed directly into the communications strategy: which concerns need to be openly addressed before the first training day takes place?

Phase 2 – Activate: Make usage visible

The activation phase does not start with a company-wide rollout. It starts with a pilot team of 8 to 15 people from different departments. They receive intensive support: short daily check-ins during the first two weeks, task-specific prompt workshops, and a dedicated Slack or Teams channel for questions and wins.

The pilot team serves two functions. First, it delivers substantive feedback on the quality of LLM responses in real task contexts — information that is essential for further fine-tuning or RAG optimisation. Second, it becomes the internal champion network: colleagues who have experienced real wins and report them in team meetings carry more persuasive weight than any management communication.

Phase 3 – Anchor: LLM as a standard tool

Adoption is only sustainable once the LLM is no longer perceived as a novelty but as a natural work tool — as obvious as email or the ERP system today. Embedding it at that level requires structural measures: integrating the LLM into existing process documentation, including it in onboarding materials for new employees, and regularly communicating KPI developments to all stakeholders.

Maintaining the champion community beyond the rollout phase is equally important. Monthly exchange formats where new use cases are shared and problems solved together keep usage intensity high and prevent the system from falling into disuse six months down the line.

Which KPIs actually matter

Adoption can be measured — and should be. The metrics that count are not monthly active users (which can easily be inflated by nudges) but depth of usage: how many interactions per session? How many follow-up prompts? How many users return on day three, day seven, and day thirty after their first session?
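The depth metrics named above can be derived from a plain interaction log. What follows is a minimal sketch in Python, not a reference implementation. It assumes a hypothetical log in which each prompt is recorded as a (user_id, session_id, timestamp) tuple. The field names, the function names and the sample data are illustrative assumptions. "Returning on day N" is interpreted here as any activity N or more calendar days after the user's first active day, and other definitions (activity on exactly day N, for example) are just as defensible.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical event shape: (user_id, session_id, ISO timestamp of one prompt).
Event = tuple[str, str, str]


def interactions_per_session(events: list[Event]) -> float:
    """Average number of prompts per session; follow-ups are this value minus one."""
    per_session: dict[tuple[str, str], int] = defaultdict(int)
    for user_id, session_id, _ in events:
        per_session[(user_id, session_id)] += 1
    if not per_session:
        return 0.0
    return sum(per_session.values()) / len(per_session)


def retention(events: list[Event], day: int) -> float:
    """Share of users active again `day` or more days after their first active date."""
    dates_by_user: dict[str, set] = defaultdict(set)
    for user_id, _, timestamp in events:
        dates_by_user[user_id].add(datetime.fromisoformat(timestamp).date())
    if not dates_by_user:
        return 0.0
    returned = 0
    for dates in dates_by_user.values():
        first_day = min(dates)
        if any((d - first_day).days >= day for d in dates):
            returned += 1
    return returned / len(dates_by_user)


# Invented sample data, for illustration only.
SAMPLE_EVENTS: list[Event] = [
    ("anna", "s1", "2024-03-01T09:00:00"),
    ("anna", "s1", "2024-03-01T09:02:00"),
    ("anna", "s1", "2024-03-01T09:05:00"),
    ("anna", "s2", "2024-03-04T10:00:00"),
    ("ben", "s3", "2024-03-01T11:00:00"),
    ("cara", "s4", "2024-03-02T14:00:00"),
    ("cara", "s5", "2024-03-10T08:30:00"),
    ("cara", "s5", "2024-03-10T08:31:00"),
]

if __name__ == "__main__":
    print("Interactions per session:", interactions_per_session(SAMPLE_EVENTS))
    for n in (3, 7, 30):
        print(f"Day-{n} retention:", retention(SAMPLE_EVENTS, n))
```

Tracking these values per department rather than company-wide makes it easier to see where usage is shallow even though the headline user count looks healthy.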

Qualitative signals are equally telling: are LLM-generated documents being presented in team meetings? Are team leads reporting time savings spontaneously? Are new use-case requests arriving from business units? These are early indicators of genuine anchoring — long before the headline ROI numbers become visible. Tracking them regularly allows you to course-correct early where a department is falling behind and to scale successes where they are already visible.