Why the matrix must not be a paper exercise

Many companies have adopted an AI usage policy: which tools are allowed, how company data may be used, which information must not go to external services. That is a start — but not enough. A policy without role assignments is an aspiration, not operational governance.

The decisive question is not only “what applies?” but “who decides, who documents, who escalates — and by when?” That is what a roles and responsibility matrix answers: it turns abstract requirements into day-to-day tasks that do not require a meeting for every decision.

The four core duties in detail

The matrix does not need to be complex — it needs to be complete. In practice, four duties cover most situations; each maps to one or more functions with explicit expectations.

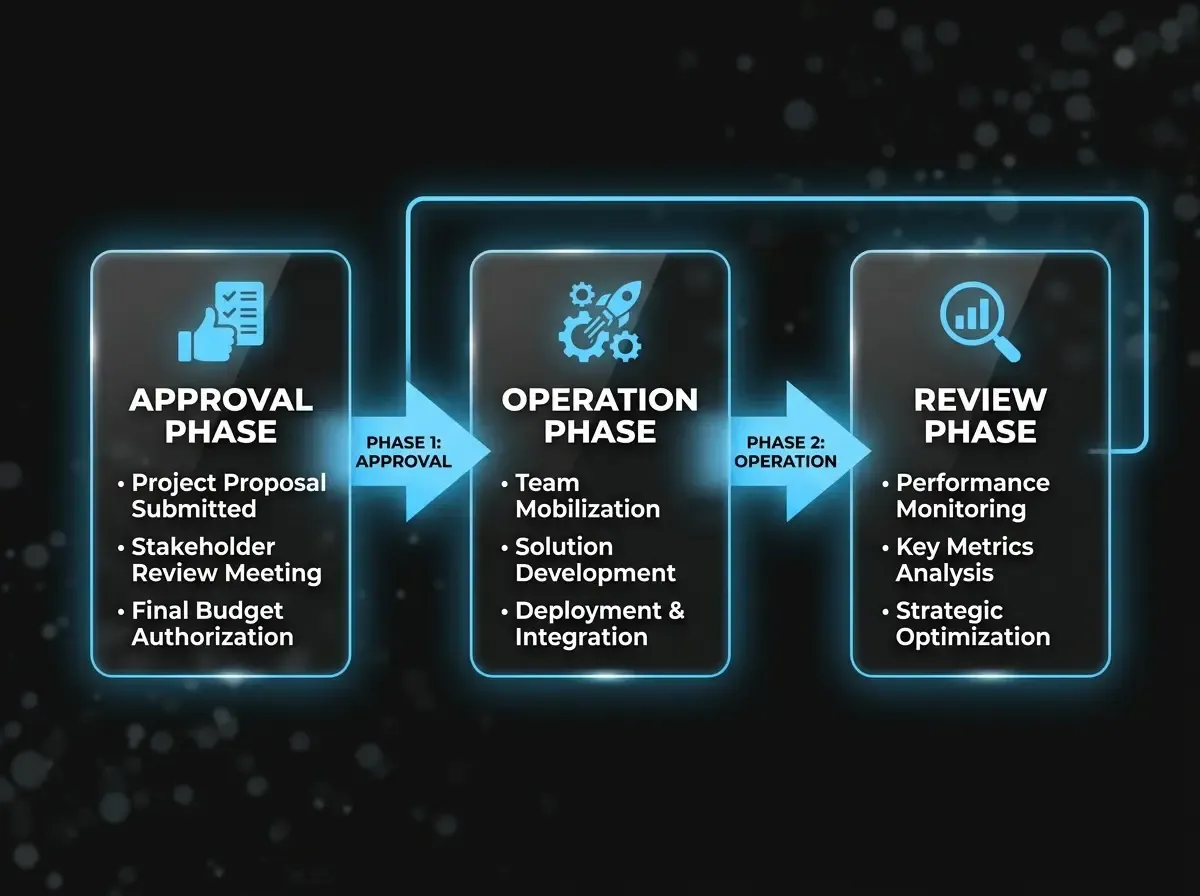

1. Approval: who authorises AI use?

Before a new tool is rolled out or an existing one is expanded, you need structured approval. In mid-sized firms this typically involves IT leadership (technical fit and security), the owning business unit (use-case fit), and legal or the data protection officer (DPO) for GDPR and EU AI Act checks. The DPO alone does not “approve AI”: approval is a joint decision, and the matrix must reflect that.

2. Documentation: who records what happened?

Under the EU AI Act and GDPR, accountability is not optional. Which model was used with which inputs? Which decisions were influenced by AI outputs? The business unit primarily owns this documentation; IT must provide the audit logs and access trails that support it. If the two are not aligned, the records have gaps that become expensive in an audit.

3. Monitoring: who checks live operation?

AI behaviour shifts over time — new model versions, data drift, context changes. Monitoring belongs with the function that uses the system daily; IT and legal receive periodic reports and step in at defined thresholds. That “traffic light” model avoids both neglect and permanent firefighting.
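As a rough illustration of the traffic light idea, the sketch below maps a single monitored metric to a status and to the functions that get involved. The metric (an error rate), the threshold values, and the role names are assumptions chosen for the example; a real matrix would define its own per use case.

```python
from enum import Enum


class Status(Enum):
    GREEN = "green"
    AMBER = "amber"
    RED = "red"


# Hypothetical mapping: who gets involved at each status.
INVOLVED = {
    Status.GREEN: ["business unit"],
    Status.AMBER: ["business unit", "IT"],
    Status.RED: ["business unit", "IT", "legal"],
}


def classify(error_rate: float, amber_at: float = 0.02, red_at: float = 0.05) -> Status:
    """Map an observed error rate to a traffic light status.

    The default thresholds are placeholders, not recommendations.
    """
    if not 0 <= amber_at <= red_at:
        raise ValueError("thresholds must satisfy 0 <= amber_at <= red_at")
    if error_rate >= red_at:
        return Status.RED
    if error_rate >= amber_at:
        return Status.AMBER
    return Status.GREEN


def who_steps_in(error_rate: float) -> list[str]:
    """Return the functions involved for the given error rate."""
    return INVOLVED[classify(error_rate)]
```

With these placeholder thresholds, `who_steps_in(0.03)` would involve the business unit and IT, while legal is only pulled in once the red threshold is reached.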

4. Escalation: who acts when something goes wrong?

Escalation is often the least clearly defined duty, and the most critical. For serious errors, suspected data incidents, or regulatory enquiries, the matrix should state who notifies whom, in what order, and within which timeframe, including the point at which leadership is informed.
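One way to make "who, in what order, by when" concrete is to store the escalation path as an ordered list and derive notification deadlines from the moment an incident is detected. The roles and timeframes in this sketch are invented for illustration and are not legal guidance; statutory deadlines would need to be checked separately.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class EscalationStep:
    role: str
    notify_within: timedelta  # measured from the time of detection


# Hypothetical path: order and timeframes are assumptions for this example.
ESCALATION_PATH = [
    EscalationStep("owning business unit lead", timedelta(hours=1)),
    EscalationStep("IT security", timedelta(hours=4)),
    EscalationStep("legal / DPO", timedelta(hours=24)),
    EscalationStep("executive leadership", timedelta(hours=48)),
]


def notification_schedule(detected_at: datetime, path=ESCALATION_PATH):
    """Return (role, notify-by time) pairs in escalation order."""
    return [(step.role, detected_at + step.notify_within) for step in path]
```

Calling `notification_schedule(datetime(2025, 1, 6, 9, 0))` yields one deadline per role, so nobody has to work out during an incident who is next in line.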

How the matrix looks in practice

The matrix is not a one-off audit PDF. Each approved AI application gets a row: tool / use case, risk class, owning unit, approval role, documentation cadence, monitoring rhythm, escalation path. Expiry dates per row remind you that approvals are not permanent.

Review the table when tools, models, or data categories change — ideally within two weeks of the change.
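If the matrix is kept as structured data rather than a document, the row layout described above could look roughly like this. The field names follow the columns listed in the text; the class name, the example values, and the helper functions are assumptions made for the sketch.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# From the text: review ideally within two weeks of a change.
REVIEW_WINDOW = timedelta(weeks=2)


@dataclass
class MatrixRow:
    use_case: str
    risk_class: str
    owning_unit: str
    approval_role: str
    documentation_cadence: str
    monitoring_rhythm: str
    escalation_path: str
    approval_expires: date  # approvals are not permanent

    def is_expired(self, today: date) -> bool:
        """True once the approval's expiry date has passed."""
        return today > self.approval_expires


def review_due_by(changed_on: date) -> date:
    """Latest review date after a tool, model, or data category change."""
    return changed_on + REVIEW_WINDOW


# Hypothetical example row; all values are illustrative.
example_row = MatrixRow(
    use_case="Internal HR FAQ chatbot",
    risk_class="low",
    owning_unit="HR",
    approval_role="Head of IT",
    documentation_cadence="quarterly",
    monitoring_rhythm="monthly",
    escalation_path="HR lead, then IT, then legal",
    approval_expires=date(2025, 12, 31),
)
```

A scheduled job could then flag rows where `is_expired(date.today())` is true, or where a recorded change is older than `REVIEW_WINDOW` without a review.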

Typical pitfalls

- Too many approvers: if five people “own” approval, nobody does. Assign primary ownership and cover absences — do not split first-line accountability.

- Ignoring risk class: an internal HR FAQ chatbot is not governed like credit decision support. Scale duties with risk.

- Legal-only design: if business units did not help create the matrix, it will not be followed in practice. Build it in a cross-functional workshop.
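Some of these pitfalls can be caught mechanically if the matrix is machine-readable. The following sketch checks a row, represented here as a plain dictionary, for the first two problems. The key names and the risk class labels are hypothetical; the third pitfall concerns how the matrix was created and is not something a script can verify.

```python
# Hypothetical risk class labels; substitute your organisation's own scheme.
RISK_CLASSES = {"minimal", "limited", "high"}


def find_pitfalls(row: dict) -> list[str]:
    """Return a list of findings for one matrix row (empty if none)."""
    findings = []

    # Pitfall 1: split first-line accountability.
    if len(row.get("primary_approvers", [])) != 1:
        findings.append("exactly one primary approver required")
    if not row.get("deputy_approver"):
        findings.append("no deputy named to cover absences")

    # Pitfall 2: risk class ignored or undefined.
    if row.get("risk_class") not in RISK_CLASSES:
        findings.append("missing or unknown risk class")

    return findings
```

For example, a row with five primary approvers and no risk class would return two findings, while a row with one primary approver, a deputy, and a known risk class returns an empty list.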

Defining clear roles early lets you scale AI initiatives faster, because every approval rests on a shared foundation.