The Pilot Trap: Why AI Projects Stall in Mid-Market Companies

A manufacturer in southern Germany launches an AI pilot for automated order processing. After ten weeks the results are impressive: 85% accuracy, enthusiastic feedback from the sales team, engaged sponsors in IT and procurement. Six months later the pilot is still running — isolated, manually maintained, with no integration into the ERP. The project team has moved on to other priorities; executive sponsorship has dissolved.

This scenario is not an exception. Consulting-firm research consistently reports that more than two-thirds of AI pilots in DACH mid-market companies never reach production. The root cause is rarely technical failure: the models work. The problem is the transition from the protected pilot environment into the rough daily reality of a running business, with real users, system dependencies, data-privacy requirements, and shifting priorities.

This pattern has a name: the Pilot Trap. Companies have become good at launching pilots. They have become poor at building the structural prerequisites for the production transition. Escaping this trap does not require a better AI model — it requires a better framework for the phases before and after.

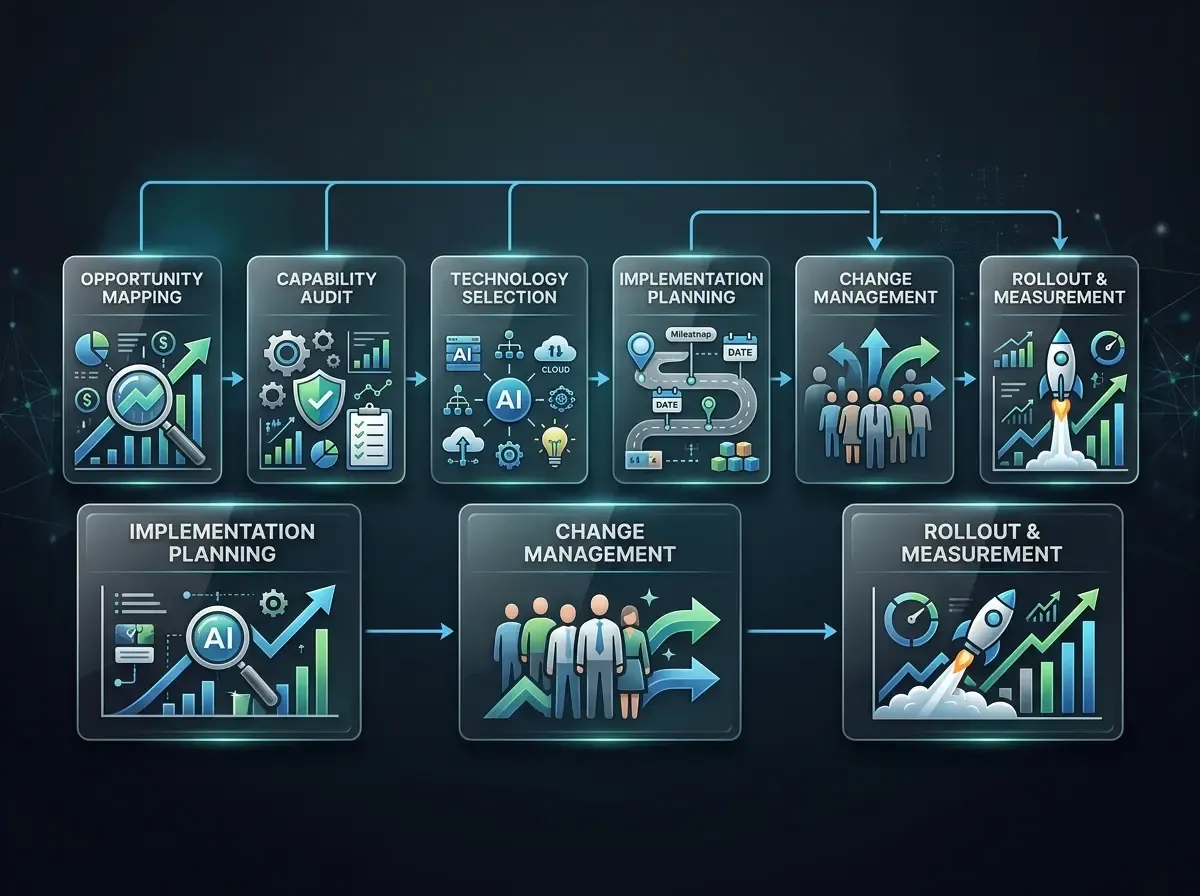

AI Strategy Development for SMEs: The 6-Step Framework

The following framework was built from practice for mid-market companies. It is not a theoretical construct but condensed experience from projects in manufacturing, professional services, and B2B service firms across the DACH region — companies with 50 to 500 employees that deploy AI not as an end in itself, but as a tool for solving concrete operational problems.

Step 1: Use-Case Prioritization — What Comes First?

The most common strategic mistake: companies choose the technically most interesting use case, not the operationally most important one. A good entry-level use case meets three criteria simultaneously: it solves a real, daily pain point (not a "nice-to-have"), it can be piloted in 8–12 weeks, and it produces measurable results that can be communicated internally.

Practical approach: run a structured workshop with IT leadership, representatives from two or three business units, and, critically, a member of the executive team. Collect ideas and score them across two dimensions: Impact (how much time, error, or cost does the underlying problem cause today?) and Feasibility (what data is available? which systems need to be connected?). The outcome is a prioritized list: not a consensus compromise, but a clear sequence.

Typical quick-win use cases for SMEs: automated document capture and classification, AI-driven email triage and routing, intelligent order recommendations based on historical data, internal knowledge base with natural-language search.
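
The Impact and Feasibility scoring from the workshop can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the 1-to-5 scale, the multiplication of the two scores, the tie-break on impact, and the example scores are all assumptions made for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    impact: int       # assumed scale: 1 (low) to 5 (high) time, error, or cost today
    feasibility: int  # assumed scale: 1 (hard) to 5 (easy) data and system access


def prioritize(use_cases: list[UseCase]) -> list[UseCase]:
    """Rank by impact x feasibility; ties go to the higher-impact use case."""
    return sorted(
        use_cases,
        key=lambda uc: (uc.impact * uc.feasibility, uc.impact),
        reverse=True,
    )


# Hypothetical workshop scores, for illustration only.
candidates = [
    UseCase("Order recommendations", impact=5, feasibility=2),
    UseCase("Knowledge base search", impact=2, feasibility=3),
    UseCase("Document capture and classification", impact=4, feasibility=5),
    UseCase("Email triage and routing", impact=3, feasibility=4),
]

ranked = prioritize(candidates)
for rank, uc in enumerate(ranked, start=1):
    print(f"{rank}. {uc.name} (score {uc.impact * uc.feasibility})")
```

The point of the sorted output is the "clear sequence" the workshop is meant to produce: one use case is first, and the rest follow in a fixed order.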

Step 2: Stakeholder Alignment — Who Needs to Be on Board?

AI projects rarely fail because of technology. They fail because of missing support from the right people at the right time. Stakeholder alignment does not mean everyone is enthusiastic — it means the critical people know the direction, their concerns have been addressed, and there is a clear mandate.

Three roles are non-negotiable: an Executive Sponsor at leadership level who secures priority and budget; a Business Unit Champion who defends the pilot in day-to-day operations and channels user feedback; and a Technical Owner (often IT lead or lead developer) who owns system integration and data privacy. Without these three roles, a production rollout is structurally impossible.

Step 3: Data and System Assessment — What Is Actually Available?

The most underestimated step. Companies systematically overestimate the quality and availability of their own data. Before the pilot, an honest data assessment is essential: what data exists? In what quality? Who may access it? Which systems need to be connected, and at what cost?

Specific checkpoints: is the relevant data structured or unstructured? Does it reside in one system or distributed across multiple sources? Is there a GDPR-compliant legal basis for AI-purpose use? What API interfaces or export options do the source systems (ERP, CRM, DMS) offer? A realistic data profile prevents costly surprises in week six of the pilot.
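
The checkpoints above can be recorded as a simple data profile per source system. The following Python sketch is one possible shape for such a profile. The class name, field names, and example systems are hypothetical, and the issue wording is illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataSourceProfile:
    system: str                   # e.g. "ERP", "CRM", "DMS"
    structured: bool              # structured vs. unstructured data
    has_api_or_export: bool       # API interface or export option available
    legal_basis_documented: bool  # GDPR legal basis for the AI purpose on file


def open_issues(profiles: list[DataSourceProfile]) -> list[str]:
    """Return the checkpoints that still need attention before the pilot."""
    issues = []
    for p in profiles:
        if not p.structured:
            issues.append(f"{p.system}: unstructured data, plan extraction effort")
        if not p.has_api_or_export:
            issues.append(f"{p.system}: no API or export option identified")
        if not p.legal_basis_documented:
            issues.append(f"{p.system}: GDPR legal basis not documented")
    if len(profiles) > 1:
        issues.append(f"data is distributed across {len(profiles)} systems")
    return issues


# Hypothetical assessment result, for illustration only.
profiles = [
    DataSourceProfile("ERP", structured=True, has_api_or_export=True,
                      legal_basis_documented=False),
    DataSourceProfile("DMS", structured=False, has_api_or_export=True,
                      legal_basis_documented=True),
]

issues = open_issues(profiles)
for issue in issues:
    print("-", issue)
```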

Step 4: Structure and Measure the Pilot

A well-structured pilot is not an open-ended experiment — it is a time-boxed feasibility test with defined success criteria. Before you start, establish: what constitutes success? Which metrics will be tracked? What is the baseline (manual effort today)? What are the abort criteria?

Recommended pilot duration: 8–12 weeks. A shorter pilot yields too little data; a longer one increases the risk that the project drifts into "eternal beta." Measure not only technical KPIs (accuracy, latency) but also, and more importantly, operational KPIs: staff-hours saved per week, error rate versus the manual process, and business-unit user satisfaction.
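
Success and abort criteria are easiest to hold to when they are written down as explicit thresholds against the baseline before the pilot starts. The sketch below shows one way to do that in Python. The thresholds, the three-way verdict, and the example numbers are assumptions for illustration; each company has to set its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WeeklyKpis:
    staff_hours: float  # hours spent on the process per week
    error_rate: float   # share of items handled incorrectly, 0.0 to 1.0


def evaluate(baseline: WeeklyKpis, pilot: WeeklyKpis,
             min_hours_saved: float, abort_error_rate: float) -> str:
    """Compare pilot KPIs with the manual baseline using pre-agreed criteria."""
    hours_saved = baseline.staff_hours - pilot.staff_hours
    # Abort criteria: no time saved, or errors above the agreed ceiling.
    if hours_saved <= 0 or pilot.error_rate > abort_error_rate:
        return "abort"
    # Success criteria: enough time saved and no worse than the manual process.
    if hours_saved >= min_hours_saved and pilot.error_rate <= baseline.error_rate:
        return "success"
    return "continue"


# Hypothetical numbers, for illustration only.
baseline = WeeklyKpis(staff_hours=40.0, error_rate=0.04)
pilot = WeeklyKpis(staff_hours=28.0, error_rate=0.03)

verdict = evaluate(baseline, pilot, min_hours_saved=10.0, abort_error_rate=0.08)
print(verdict)
```

With these example numbers the pilot saves 12 staff-hours per week at a lower error rate than the manual process, so the verdict is "success". User satisfaction is left out of the sketch because it usually comes from a survey rather than a weekly measurement.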

Step 5: Change Management for the Production Rollout

This step is missing or arrives too late in 80% of failed projects. Change management for AI means involving employees from the start of the pilot rather than merely informing them at go-live. Communicate transparently what the AI takes over and what it does not. Treat training not as a one-off obligation but as an ongoing process.

Common sources of resistance: fear of job displacement (rarely stated openly, but it shapes behavior); skepticism toward recommendations that cannot be explained; and unacknowledged extra workload during the transition period. All three are solvable, but only if actively addressed.

Proven in practice: identify 2–3 power users in the business unit who actively accompany the rollout and serve as the first point of contact for colleagues. These individuals are not additional overhead — they are the critical transmission belt between technology and operations.

Step 6: Scaling and Continuous Optimization

The first successful rollout is not an endpoint — it is the foundation for genuine scaling. Now is the time to preserve the lessons learned: which process steps proved AI-suitable? Which data problems had to be resolved, and how can those solutions transfer to other areas? Which governance structures worked?

Scaling for SMEs does not necessarily mean "more AI everywhere." It means strategically identifying the next two or three use cases that build on the groundwork of the first project. A successful document-processing project delivers the data infrastructure for an intelligent order recommendation engine. A successful email-routing system lays the groundwork for a complete customer-service workflow.

Measure continuously, and measure operational impact as well as technical metrics. Quarterly reviews with the Executive Sponsor and Business Unit Champion keep the project in strategic focus and prevent AI initiatives from drifting back into "eternal pilot" status. Developing an AI strategy for SMEs is a marathon, not a sprint.