The Technology-First Trap: When the Pilot Works but Nobody Uses It

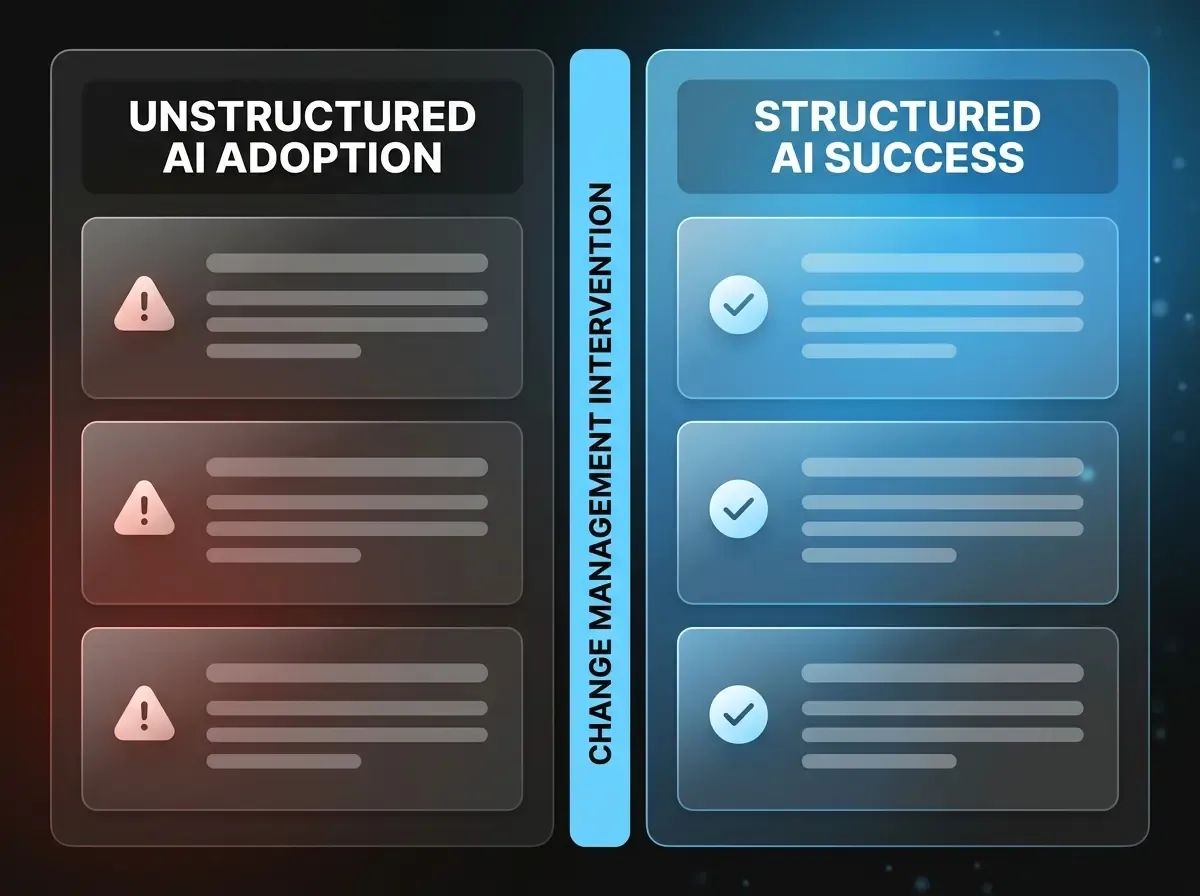

A mid-sized logistics company in North Rhine-Westphalia launches an AI-powered dispatch planning system after five months of development. The controlled test results are compelling: 22% shorter planning times, fewer manual corrections, a clean interface with the existing TMS. Three months after go-live, the active utilisation rate stands at 18%. Dispatchers keep running their old manual process alongside the system: double the effort, half the benefit.

This pattern repeats across industries and company sizes with alarming regularity. Technically, the system is built correctly. Users have been briefed. And yet the old way of working survives, not because it is better, but because it is familiar, and because no one made sure that the new way of working was actually adopted in day-to-day practice.

AI change management in SMEs is not a supporting measure or a "soft-skill add-on" to a technical rollout project. It is the prerequisite for a functioning system to produce a functioning operation. Underestimating this means financing technology whose ROI is never realised.

AI Change Management for SMEs: The Three Roots of Resistance

Before a company plans change management measures, it is worth taking a clear-headed look at the actual causes of user resistance. Three patterns occur disproportionately often — and each has specific solutions.

Root 1: Fear of Losing Control

This is the most common concern, yet it is rarely voiced openly. Employees do not primarily fear being replaced; they fear losing control over their own work. When an AI system makes a recommendation whose reasoning an employee cannot retrace, a dilemma arises: if they follow the recommendation, they take responsibility for a decision they don't understand. If they ignore it, productivity suffers.

The solution lies not in technical explainability alone, but in organisational communication: clear responsibility rules — the AI recommends, the human decides — must be stated explicitly, in writing, and reinforced repeatedly. This boundary-setting is not a disclaimer — it is the foundation of acceptance.

Root 2: Opacity of Benefits

Leadership teams often communicate AI projects from a strategic perspective: "We're increasing efficiency," "We're reducing errors by X percent." This is relevant to decision-makers, but usually not to the clerk who works with the system every day. What matters to them: Will I have more or less work tomorrow? Will my job become easier or more complicated? Will I still be needed in three years?

Change communication must explicitly address these questions — at the level of the individual role, not the company. "The AI handles pre-sorting so you can focus on the cases where your judgement really counts." That is not marketing — it is the information that creates acceptance.

Root 3: Transition Overhead Without Communication

Every new system creates additional effort in the first few weeks. Parallel operations, unfamiliar interfaces, missing routines — all of this takes time that employees don't have. If this overhead is not communicated in advance and framed as temporary, a self-fulfilling prophecy emerges: the new system is "too much effort," and the old ways are maintained.

The solution is an explicit transition phase with a communicated end date and measurable relief thereafter. "For the first four weeks you'll work in parallel. From week five, manual pre-sorting is eliminated entirely — that's 45 minutes returned to you every day." Concrete promise, concrete date, measurable delivery.

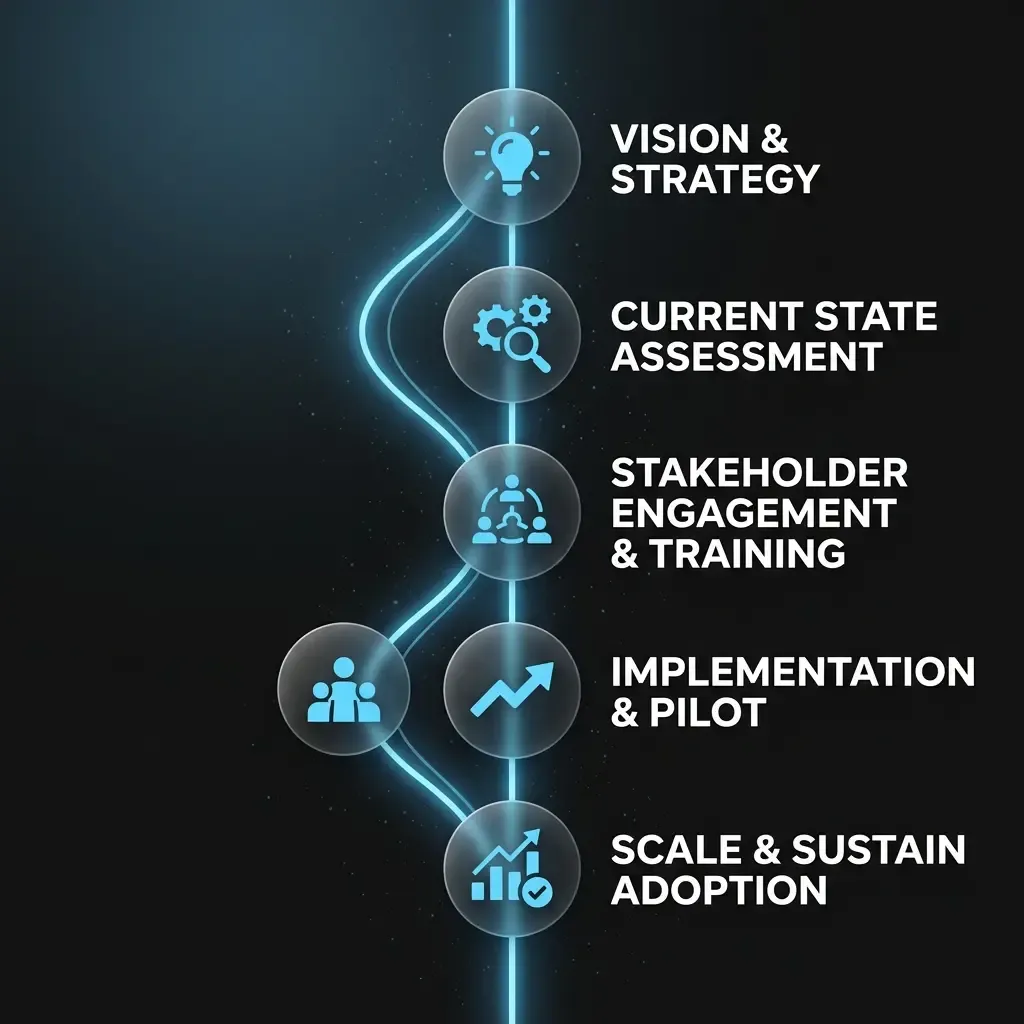

The Four-Phase Framework for AI Change Management in SMEs

The following framework was developed from practice for companies with 50–500 employees — without a dedicated change management department, with limited HR budgets and under day-to-day operational pressure. It picks up where most rollout projects leave off: after the technical go-live.

Phase 1: Framing — Context Before the First Meeting

Change doesn't begin on go-live day — it begins the moment employees first hear about the project. This first contact determines the emotional baseline: threat or opportunity? Secret project or transparent initiative?

Concrete measures in this phase: a personal announcement by the direct line manager (not an email from the IT manager) with clear answers to the three most important employee questions: What changes for me? What stays the same? When and how will I be involved? The announcement should ideally take place 6–8 weeks before the pilot starts, not in the final days before it.

Common mistake: the project is only communicated when it is "ready" — i.e., shortly before go-live. This creates distrust, not trust. Employees wonder why it took so long, and interpret that as a signal that their opinion was not sought.

Phase 2: Activating Champions

No leadership team and no IT department can manage change in day-to-day operations alone. The decisive lever is internal champions — employees who are perceived by their colleagues as competent and trustworthy, and who actively model the new way of working.

Recommendation: appoint 2–3 Power Users per affected department, who are involved early in the pilot, receive extended system access and act as the first point of contact for their colleagues. This role is voluntary, visibly recognised (e.g. mentioned at team meetings) and clearly time-limited.

Why does this work? Because peer communication is more effective than top-down communication in change processes. A colleague saying "I've been using it for four weeks and it's saved me X hours per week" is more persuasive than any management presentation.

Phase 3: Ongoing Training Instead of One-Off Sessions

The classic training model, a two-hour introduction after which everyone is left to get on with it, doesn't work for AI systems. One reason lies in the nature of learning: without immediate application, 70% of training content fades within 48 hours. The other is specific to AI tools: the first encounter with real errors and unexpected recommendations is decisive for trust in the system, and it happens after the training, not during it.

Recommended structure: a short foundational training (max. 90 minutes) immediately before the first day of use, followed by two 30-minute feedback rounds in weeks 2 and 4. These rounds are not training sessions in the traditional sense, but structured exchange formats: What worked? What was unclear? Which recommendations did you not follow?

The insights from these feedback rounds feed directly into system configuration — and that is the real value. Employees see that their feedback changes the system. This is the strongest commitment signal a company can send.

Phase 4: Measuring and Steering Adoption

What isn't measured isn't managed. AI adoption is not a matter of gut feeling; it is a measurable process. Companies that rely exclusively on general employee satisfaction overlook structural usage barriers that remain invisible in day-to-day operations.

Three metrics have proven useful in practice:

Feature utilisation rate: how many of the available functions are actively used? Low values indicate disorientation.

Override rate: how often are AI recommendations manually overridden? High values indicate trust issues that can be addressed in the feedback rounds.

Task completion time: how long do users need for a defined task compared to the baseline? This is the most direct measure of operational benefit.

These metrics are reviewed quarterly in the Executive Sponsor review. Deviations from target values trigger concrete measures — not general concern, but specific interventions. AI change management in SMEs is a continuous process, not a one-time onboarding project.
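
As a rough illustration, the three adoption metrics and the comparison against target values could be computed from a usage export along the following lines. This is a minimal sketch under stated assumptions: the `UsageRecord` schema, the feature names, the baseline and the target values are all hypothetical and do not come from any particular TMS or dispatch product.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean


@dataclass
class UsageRecord:
    """One completed task from a usage log. The schema is an assumption."""
    user: str
    features_used: set[str]          # features touched while doing the task
    recommendation_shown: bool       # did the AI make a recommendation?
    recommendation_overridden: bool  # did the user manually change it?
    completion_minutes: float


def feature_utilisation_rate(records: list[UsageRecord], available: set[str]) -> float:
    """Share of available features that were used at least once."""
    used = set().union(*(r.features_used for r in records))
    return len(used & available) / len(available)


def override_rate(records: list[UsageRecord]) -> float:
    """Share of shown AI recommendations that users manually overrode."""
    shown = [r for r in records if r.recommendation_shown]
    if not shown:
        raise ValueError("no recommendations were shown in this period")
    return sum(r.recommendation_overridden for r in shown) / len(shown)


def completion_time_ratio(records: list[UsageRecord], baseline_minutes: float) -> float:
    """Mean task time relative to the baseline (below 1.0 means faster)."""
    return mean(r.completion_minutes for r in records) / baseline_minutes


def flag_deviations(
    metrics: dict[str, float], targets: dict[str, tuple[str, float]]
) -> list[str]:
    """Return the names of metrics that miss their target.

    Each target is ("min", value) or ("max", value).
    """
    flagged = []
    for name, (kind, limit) in targets.items():
        value = metrics[name]
        if (kind == "min" and value < limit) or (kind == "max" and value > limit):
            flagged.append(name)
    return flagged


if __name__ == "__main__":
    # Made-up sample data for one review period.
    available = {"auto_plan", "route_view", "bulk_edit", "export"}
    records = [
        UsageRecord("a", {"auto_plan", "route_view"}, True, False, 12.0),
        UsageRecord("b", {"auto_plan"}, True, True, 18.0),
        UsageRecord("c", {"route_view"}, False, False, 15.0),
    ]
    metrics = {
        "feature_utilisation_rate": feature_utilisation_rate(records, available),
        "override_rate": override_rate(records),
        "completion_time_ratio": completion_time_ratio(records, baseline_minutes=20.0),
    }
    # Hypothetical targets; real values would be agreed in the sponsor review.
    targets = {
        "feature_utilisation_rate": ("min", 0.6),
        "override_rate": ("max", 0.3),
        "completion_time_ratio": ("max", 0.9),
    }
    print(metrics)
    print("Needs intervention:", flag_deviations(metrics, targets))
```

Keeping the targets as explicit data, separate from the calculation, means each flagged metric can be tied to a specific intervention, such as an additional feedback round when the override rate is too high.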